Cloud repatriation: when moving workloads back on premises is a strategic choice, not a retreat

“The cloud is just someone else’s computer.” The old sysadmin joke has held up better than many forecasts from the last decade. After years of cloud-first mandates, digital transformation roadmaps, and hyperscaler marketing, more companies are taking a second look at where their workloads actually belong.

The motive is not nostalgia. The industry has matured enough to recognize that “cloud-always” was never more rational than “on-premises-always.” In Europe, the reassessment has an added layer: data sovereignty, regulatory compliance, and a growing unease about relying on infrastructure that may not be fully shielded from foreign government access.

The numbers are hard to ignore

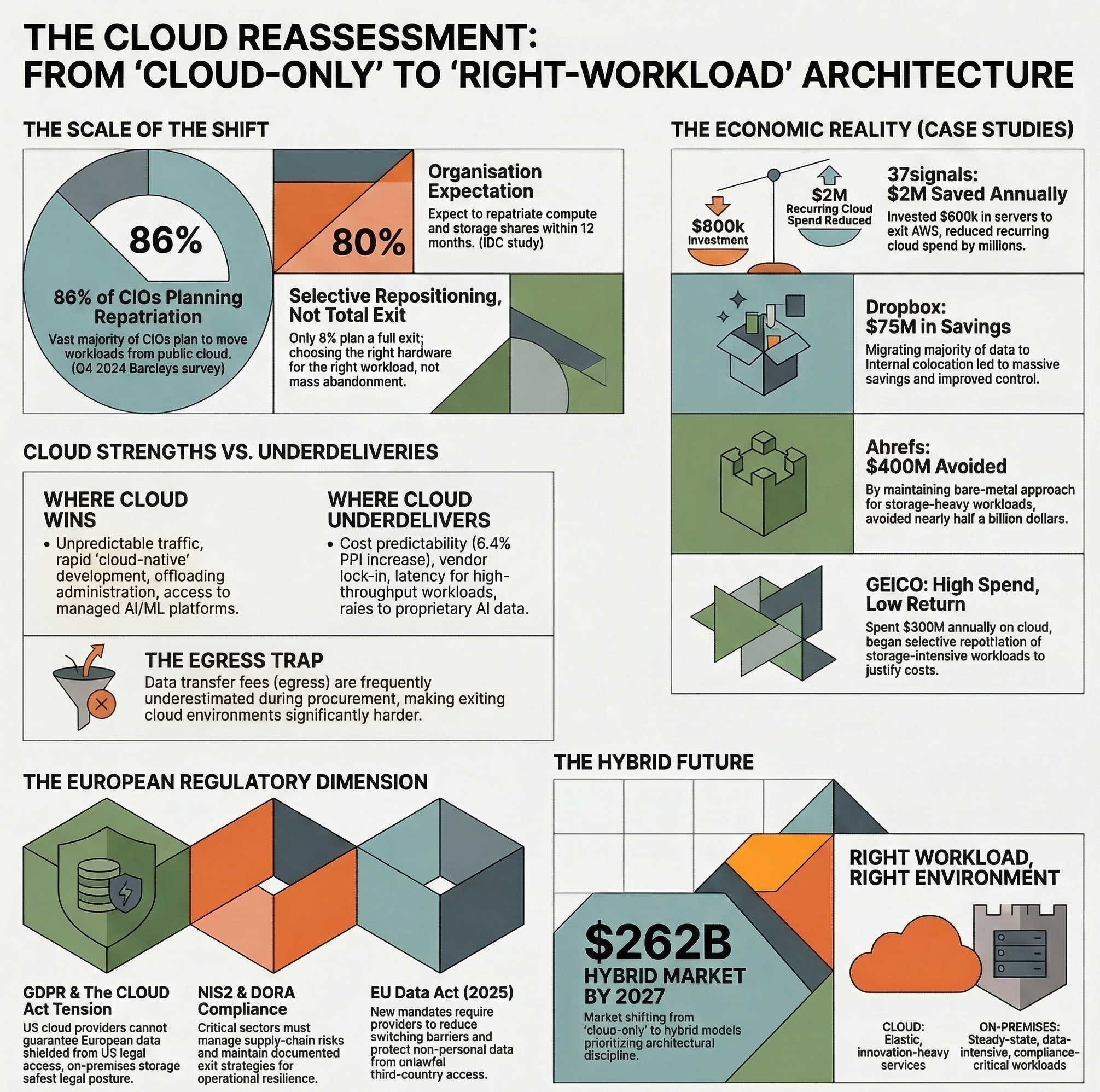

According to a Barclays CIO Survey from Q4 2024, 86% of CIOs planned to move at least some workloads from public cloud back to private cloud or on-premises environments, the highest share the survey had recorded. An IDC study from the same year found that roughly 80% of organizations expected to repatriate some of their compute and storage within the following twelve months.

Only 8% were planning a full exit. Nobody is shutting down their AWS accounts en masse; what’s happening is a selective repositioning, driven by a question that should probably have been asked much earlier: which workloads genuinely benefit from cloud, and which ones are just paying a premium for someone else’s hardware?

Case studies: when the bill finally arrived

37signals: up to $2 million a year saved, and counting

If the cloud repatriation movement has a poster child, it’s David Heinemeier Hansson (DHH), CTO of 37signals, the company behind Basecamp and HEY. After finding that the company was spending more than $3.2 million per year on AWS, DHH argued for a radical shift. The company invested roughly $600k in Dell servers and cut its compute bill by about $1.5 million to $2 million a year.
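A quick back-of-the-envelope check shows why those figures got so much attention. The sketch below assumes the quoted savings are net of the new running costs (colocation, power, staff time), which the public figures do not break down; if they are not, the real payback period is longer.

```python
def payback_months(upfront_capex: float, annual_net_savings: float) -> float:
    """Months until cumulative net savings cover the upfront hardware spend."""
    if annual_net_savings <= 0:
        raise ValueError("annual_net_savings must be positive")
    monthly_savings = annual_net_savings / 12
    return upfront_capex / monthly_savings


# Figures quoted above: roughly $600k of servers, $1.5M to $2M saved per year.
HARDWARE_SPEND = 600_000
for savings in (1_500_000, 2_000_000):
    months = payback_months(HARDWARE_SPEND, savings)
    print(f"${savings:,}/year saved -> hardware paid back in {months:.1f} months")
```

Under that assumption, the hardware pays for itself in under five months at either end of the quoted range.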

But that was only the compute side. In later write-ups, 37signals documented migrating billions of files off S3 and running roughly 10 petabytes of data on Pure Storage arrays of its own. Figures for the later savings are harder to pin to a single primary source, but the trajectory is clear: less recurring cloud spend, more direct control.

As DHH put it: “Cloud can be a good choice in certain circumstances, but the industry pulled a fast one convincing everyone it’s the only way.”

Dropbox: the original repatriator

Dropbox was doing cloud repatriation before anyone called it that. Between 2013 and 2016, in an internal project codenamed “Magic Pocket”, the company moved the vast majority of its data off AWS and onto its own custom-built storage infrastructure housed in colocation facilities. According to later technical reporting, that move led to nearly $75 million in savings over two years, along with dramatically improved control over the storage stack.

GEICO: $300M a year with little visible return

In 2013, GEICO began migrating more than 600 applications to the cloud. By 2021, it was reportedly spending over $300 million annually on cloud services, and internal stakeholders were struggling to justify the expense. Trade-press coverage of the case emphasized a now-familiar problem: for data-heavy estates, cloud storage costs can dominate the bill surprisingly quickly. GEICO subsequently began a selective repatriation of its most storage-intensive workloads.

Ahrefs: the math that changed everything

Ahrefs laid out the arithmetic in public: in a technical write-up, the company claimed its bare-metal approach had avoided roughly $400 million in cloud costs. The pattern running through all these examples is the same: once workloads become large, steady, and storage-heavy, owning your hardware starts to look very different from renting it.

A more honest accounting of cloud pros and cons

Cloud was not a mistake. But it is a tool, not a destiny, and like every tool it works well in some contexts and poorly in others.

What cloud genuinely does well

For workloads with unpredictable traffic (consumer apps, seasonal e-commerce, early-stage startups), the ability to scale on demand without upfront CapEx is hard to beat. You pay for what you use, when you use it.
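The elasticity argument is easiest to see with a toy model. The sketch below compares paying per server-hour in the cloud with owning enough servers to cover the peak. The hourly load profiles and both rates are invented for illustration, not real prices.

```python
def cloud_cost(hourly_demand: list[int], rate_per_server_hour: float) -> float:
    """Pay only for the servers actually running in each hour."""
    return sum(hourly_demand) * rate_per_server_hour


def owned_cost(hourly_demand: list[int], amortized_cost_per_server_hour: float) -> float:
    """Owned capacity must be sized for the peak and is paid for every hour."""
    return max(hourly_demand) * len(hourly_demand) * amortized_cost_per_server_hour


# Hypothetical days: mostly idle with a 4-hour spike, versus a flat load.
spiky = [2] * 20 + [40] * 4
steady = [20] * 24
CLOUD_RATE, OWNED_RATE = 1.00, 0.40  # assumed $/server-hour, not vendor pricing

for name, load in (("spiky", spiky), ("steady", steady)):
    print(f"{name}: cloud ${cloud_cost(load, CLOUD_RATE):.0f}, "
          f"owned ${owned_cost(load, OWNED_RATE):.0f}")
```

With these assumed numbers, the spiky profile is cheaper in the cloud (200 vs 384) and the steady one is cheaper on owned hardware (480 vs 192), which is the trade-off this article keeps returning to.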

Cloud-native development (managed Kubernetes, CI/CD pipelines, Infrastructure-as-Code) can dramatically accelerate delivery cycles. Provisioning environments in minutes rather than weeks is a genuine productivity gain for development teams.

Offloading database administration, backups, patch cycles, and hardware lifecycle to a provider creates real savings for organizations without dedicated infrastructure teams. Not every company can run its own data center, and not every company should.

Multi-region failover, global CDNs, and managed disaster recovery, which would cost millions to replicate on premises, are available off the shelf from every major cloud provider.

Managed AI/ML platforms, petabyte-scale data warehouses, and globally distributed databases are extraordinarily hard to build in-house. For many organizations, cloud remains the only practical path to these capabilities.

Where cloud consistently underdelivers

Cost predictability

The Producer Price Index for cloud computing services rose by 6.4% between September 2023 and May 2024. Cloud pricing is not magically trending toward zero. For stable, predictable workloads, the pay-as-you-go model is often more expensive than owned hardware over a 3-5 year horizon. Egress fees (what you pay to move data out of the cloud) are especially easy to underestimate during procurement.
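A rough multi-year comparison makes the horizon effect visible. This sketch assumes a flat monthly compute bill, a fixed monthly egress volume, and a constant annual price increase on the cloud side. On the owned side, it assumes one upfront hardware purchase plus flat yearly running costs. Every number in it is hypothetical; the point is the shape of the comparison, not the totals.

```python
def cloud_tco(monthly_compute: float, monthly_egress_tb: float, egress_per_tb: float,
              annual_price_increase: float, years: int) -> float:
    """Total cloud spend over `years`, with prices compounding once per year."""
    monthly_bill = monthly_compute + monthly_egress_tb * egress_per_tb
    return sum(12 * monthly_bill * (1 + annual_price_increase) ** year for year in range(years))


def owned_tco(hardware_capex: float, annual_opex: float, years: int) -> float:
    """Upfront hardware plus flat yearly running costs (power, colocation, support, staff share)."""
    return hardware_capex + annual_opex * years


# Hypothetical inputs, not vendor quotes.
for years in (3, 5):
    cloud = cloud_tco(monthly_compute=50_000, monthly_egress_tb=100, egress_per_tb=90,
                      annual_price_increase=0.05, years=years)
    owned = owned_tco(hardware_capex=1_200_000, annual_opex=400_000, years=years)
    print(f"{years} years: cloud ${cloud:,.0f} vs owned ${owned:,.0f}")
```

With these inputs, the cloud comes out ahead over three years (about $2.23M vs $2.4M) but falls behind over five (about $3.91M vs $3.2M). Egress is modeled as a flat per-terabyte charge, although real price lists are usually tiered. The model also ignores a hardware refresh, which would need to be added once the owned equipment reaches the end of its useful life.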

Vendor lock-in

Migrating into cloud is frictionless by design. Migrating out of cloud, or between providers, is significantly harder. Proprietary APIs, managed service dependencies, data format lock-in, and pricing structures tied to data transfer all create a gravitational pull that makes exit far more expensive than the initial architecture review ever anticipated.

Performance for high-throughput, low-latency workloads

For AI/ML training on large proprietary datasets, real-time industrial IoT processing, or high-frequency financial analytics, the shared nature of cloud infrastructure can introduce latency and performance variance that dedicated hardware largely avoids. For consistent, predictable workloads, on-premises infrastructure can offer better and more stable performance at a lower long-term cost.
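When you compare environments for latency-sensitive workloads, the median alone hides the problem. Tail latency and spread matter more. The minimal sketch below uses Python's standard `statistics` module on synthetic samples. The occasional 20 ms spikes stand in for contention on shared hosts and are invented for illustration.

```python
import statistics


def latency_profile(samples_ms: list[float]) -> dict[str, float]:
    """Median, 99th percentile, and coefficient of variation of latency samples."""
    cut_points = statistics.quantiles(samples_ms, n=100)  # 99 cut points: index 49 ~ p50, index 98 ~ p99
    return {
        "p50": cut_points[49],
        "p99": cut_points[98],
        "cv": statistics.stdev(samples_ms) / statistics.fmean(samples_ms),
    }


# Synthetic data: a tight 5.0-5.4 ms band, plus a 20 ms spike on 2% of requests in the "shared" case.
dedicated = [5.0 + (i % 5) * 0.1 for i in range(200)]
shared = [5.0 + (i % 5) * 0.1 + (20.0 if i % 50 == 0 else 0.0) for i in range(200)]

for name, samples in (("dedicated", dedicated), ("shared", shared)):
    profile = latency_profile(samples)
    print(name, {k: round(v, 2) for k, v in profile.items()})
```

The medians match, but the p99 and the coefficient of variation diverge sharply. Those are the numbers worth measuring before and after any move.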

Security posture and control

The shared responsibility model gets a lot of airtime, but in practice it is frequently misunderstood. According to Palo Alto Networks’ State of Cloud Security Report 2025, 53% of organizations identify lax IAM practices as a top challenge and a leading vector for data exfiltration. The Verizon 2025 DBIR found that 30% of breaches involved a third party, double the previous year’s figure, a finding directly relevant to cloud supply-chain risk.
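Lax IAM usually shows up as wildcards. The minimal sketch below checks AWS-style JSON policy documents and flags `Allow` statements whose `Action` or `Resource` is `*` or a service-wide wildcard such as `s3:*`. It deliberately ignores `NotAction`, `NotResource`, and conditions, so it complements a dedicated tool such as AWS IAM Access Analyzer rather than replacing it.

```python
import json


def wildcard_findings(policy_json: str) -> list[str]:
    """Flag Allow statements that grant every action or resource, or a whole service."""
    policy = json.loads(policy_json)
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a single statement may appear as an object, not a list
        statements = [statements]
    findings = []
    for idx, stmt in enumerate(statements):
        if stmt.get("Effect") != "Allow":
            continue
        for key in ("Action", "Resource"):
            values = stmt.get(key, [])
            if isinstance(values, str):  # both fields accept a string or a list of strings
                values = [values]
            for value in values:
                if value == "*" or value.endswith(":*"):
                    findings.append(f"statement {idx}: {key} {value!r} is a wildcard")
    return findings


example_policy = json.dumps({
    "Version": "2012-10-17",
    "Statement": [
        {"Effect": "Allow", "Action": "s3:*", "Resource": "*"},
        {"Effect": "Allow", "Action": ["s3:GetObject"], "Resource": "arn:aws:s3:::example-bucket/*"},
    ],
})
for finding in wildcard_findings(example_policy):
    print(finding)
```

Only the first statement is flagged. The second is scoped to one action on one bucket's objects, which is the shape least-privilege policies should take.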

The most instructive case is probably Capital One’s 2019 breach. A misconfigured web application firewall on AWS allowed an attacker to exploit the cloud metadata service via SSRF and access over 106 million customer records, including Social Security numbers and bank account details. Amazon’s response was that the vulnerability lay in Capital One’s application layer, not in AWS itself. That distinction is the shared responsibility model in a nutshell: the provider secures the infrastructure, the customer secures everything running on top of it. In practice, the boundary is blurry enough that even large, well-funded security teams can get it wrong.
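One common defence against the SSRF pattern in the Capital One case is to validate outbound URLs before an application fetches them. The minimal sketch below uses only the standard library and assumes the application only ever needs to reach public HTTP(S) endpoints. It resolves the hostname and rejects link-local addresses (including the 169.254.169.254 metadata address), as well as loopback, private, and reserved ones.

```python
import ipaddress
import socket
from urllib.parse import urlparse


def is_safe_outbound_url(url: str) -> bool:
    """Allow only http(s) URLs whose host resolves exclusively to public addresses."""
    parsed = urlparse(url)
    if parsed.scheme not in ("http", "https") or not parsed.hostname:
        return False
    try:
        port = parsed.port or (443 if parsed.scheme == "https" else 80)
        infos = socket.getaddrinfo(parsed.hostname, port, proto=socket.IPPROTO_TCP)
    except (ValueError, socket.gaierror):  # malformed port or unresolvable host
        return False
    for *_, sockaddr in infos:
        addr = ipaddress.ip_address(sockaddr[0].split("%")[0])  # drop any IPv6 zone id
        if addr.is_link_local or addr.is_loopback or addr.is_private or addr.is_reserved:
            return False
    return True


print(is_safe_outbound_url("http://169.254.169.254/latest/meta-data/"))  # False: metadata endpoint
print(is_safe_outbound_url("file:///etc/passwd"))  # False: not http(s)
```

This is only a first layer. A hostname can resolve differently between the check and the actual request (DNS rebinding), so the check works best alongside network-level egress rules and, on AWS, IMDSv2. IMDSv2 requires a session token obtained with a separate PUT request, which a simple forwarded GET cannot supply.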

On-premises environments allow security teams to implement least privilege at the hardware level, maintain audit trails end to end, and respond to incidents without waiting on a provider’s tooling or disclosure timelines. Repatriating organizations frequently report improved visibility as a secondary benefit. That said, on-prem security is not free: it requires dedicated staff, continuous patching, physical controls, and the discipline to maintain what you now fully own.

AI on proprietary data

Companies fine-tuning large language models or building domain-specific AI on confidential internal data have a strong incentive to keep that work off shared infrastructure. Even when the probability of data leakage is low, the residual risk can be incompatible with the IP-protection requirements of many sectors. This is becoming one of the fastest-growing reasons to invest in on-premises or private cloud infrastructure.

The security trade-off: no free lunch either way

Repatriation is often framed as a security win, and in many respects it can be. But it would be dishonest to pretend that running your own infrastructure is inherently safer. The real picture is more nuanced.

Cloud providers do some things exceptionally well. The hyperscalers invest billions in physical security, DDoS mitigation, encryption at rest and in transit, and global threat intelligence. Most organizations cannot match that depth of investment on their own. Managed security services (SIEM, SOAR, threat detection) are mature, widely available, and improving rapidly.

The problem is what sits on top. Misconfigurations, overly permissive IAM roles, exposed storage buckets, unrotated secrets, forgotten API keys: these are not infrastructure failures, they are application- and configuration-layer mistakes that happen at the customer level. The CSA Top Threats to Cloud Computing report consistently ranks misconfiguration and inadequate identity management among the top risks. The IBM Cost of a Data Breach Report, published annually with the Ponemon Institute, continues to show that breaches involving cloud environments tend to cost more and take longer to contain than those in purely on-premises estates.
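Unrotated secrets and forgotten API keys are easy to miss because nothing breaks. The minimal audit sketch below runs over a hypothetical credential inventory. The `Credential` record and both thresholds are assumptions: 90 days for rotation and 30 days of inactivity are common policy choices, not a standard.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class Credential:  # hypothetical inventory record
    name: str
    created_at: datetime
    last_used_at: Optional[datetime]


def audit_credentials(creds: list[Credential], now: datetime,
                      max_age: timedelta = timedelta(days=90),
                      max_idle: timedelta = timedelta(days=30)) -> list[str]:
    """Report credentials older than max_age or unused for longer than max_idle."""
    findings = []
    for cred in creds:
        age = now - cred.created_at
        if age > max_age:
            findings.append(f"{cred.name}: not rotated for {age.days} days")
        if cred.last_used_at is None or now - cred.last_used_at > max_idle:
            findings.append(f"{cred.name}: unused recently, candidate for removal")
    return findings


now = datetime(2025, 6, 1, tzinfo=timezone.utc)
inventory = [
    Credential("ci-deploy-key", now - timedelta(days=200), now - timedelta(days=1)),
    Credential("old-integration-token", now - timedelta(days=10), None),
    Credential("backup-job-key", now - timedelta(days=20), now - timedelta(hours=3)),
]
for finding in audit_credentials(inventory, now):
    print(finding)
```

The same check applies on premises and in the cloud, which is the point: these are configuration-layer hygiene problems, not infrastructure ones.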

On-premises security gives you full control, but demands the staff to exercise it. Patching cycles, firewall rule management, physical access controls, backup testing, log retention, and 24/7 monitoring all need people. For organizations with experienced security operations teams, that control translates into better posture. For organizations that repatriate workloads without scaling their SecOps capability accordingly, the outcome can be worse than the cloud setup they left behind.

Hybrid architectures create the widest attack surface. This is the part that rarely gets enough attention. An estate split across on-premises, private cloud, and one or more public providers multiplies the number of identity boundaries, network perimeters, and configuration standards that need to be maintained in parallel. Consistent policy enforcement, centralized logging, and unified incident response across all environments require serious tooling and discipline.
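Keeping one security baseline consistent across environments is largely a bookkeeping problem, so at least the detection part is easy to automate. The minimal drift check below assumes each environment's settings can be exported into a flat dictionary. The setting names, values, and environment labels are hypothetical.

```python
MISSING = "<missing>"

# Hypothetical organization-wide baseline.
BASELINE = {"mfa_required": True, "log_retention_days": 365, "tls_min_version": "1.2"}


def policy_drift(baseline: dict[str, object],
                 environments: dict[str, dict[str, object]]) -> dict[str, list[str]]:
    """Return, per environment, every baseline setting that is absent or different."""
    drift = {}
    for env, settings in environments.items():
        issues = []
        for key, expected in baseline.items():
            actual = settings.get(key, MISSING)
            if actual != expected:
                issues.append(f"{key}: expected {expected!r}, found {actual!r}")
        if issues:
            drift[env] = issues
    return drift


environments = {
    "on_prem": {"mfa_required": True, "log_retention_days": 365, "tls_min_version": "1.2"},
    "private_cloud": {"mfa_required": True, "log_retention_days": 90, "tls_min_version": "1.2"},
    "public_cloud_eu": {"mfa_required": False, "tls_min_version": "1.2"},
}
for env, issues in policy_drift(BASELINE, environments).items():
    print(env, issues)
```

Detection is the easy half. Enforcing the fix consistently across every identity boundary and perimeter is where the tooling and discipline mentioned above come in.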

The honest conclusion: moving workloads on premises can improve your security posture, but only if you invest in the operational capability to manage it. Repatriation is not a security strategy by itself.

The European dimension: sovereignty is not optional

For European organizations, the calculus goes beyond cost. Cloud repatriation is increasingly a matter of legal and regulatory necessity.

GDPR: the baseline

The General Data Protection Regulation established strict rules on personal data processing, cross-border transfers, and data subject rights. The 2020 Schrems II ruling of the Court of Justice of the European Union invalidated the EU-US Privacy Shield framework and forced European regulators to look much more closely at US-based cloud providers. The EU-US Data Privacy Framework, adopted in 2023, provides a new legal basis for transfers, but many practitioners still view it as politically fragile. For that reason, keeping personal data within EU borders remains the easiest posture to defend.
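A residency rule like that is easy to state and easy to break through a forgotten replica or backup. The minimal sketch below checks a hypothetical data inventory against an allowlist of EU locations. The region identifiers and inventory fields are illustrative assumptions. Note that it checks only where data is stored, not which jurisdiction the provider answers to.

```python
from dataclasses import dataclass, field

# Hypothetical allowlist of storage locations considered inside the EU.
EU_LOCATIONS = {"eu-west-1", "eu-central-1", "eu-north-1", "onprem-frankfurt"}


@dataclass
class Dataset:
    name: str
    contains_personal_data: bool
    locations: set[str] = field(default_factory=set)  # primary copy, replicas, and backups


def residency_violations(datasets: list[Dataset]) -> dict[str, set[str]]:
    """Map each personal-data dataset to any of its locations outside the EU allowlist."""
    violations = {}
    for ds in datasets:
        outside = ds.locations - EU_LOCATIONS
        if ds.contains_personal_data and outside:
            violations[ds.name] = outside
    return violations


inventory = [
    Dataset("crm-contacts", True, {"eu-central-1", "us-east-1"}),  # backup replicated to the US
    Dataset("public-docs", False, {"us-east-1"}),
    Dataset("payroll", True, {"onprem-frankfurt"}),
]
print(residency_violations(inventory))
```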

The CLOUD Act: the elephant in the room

In 2025, a point that legal analysts had raised for years became harder to dismiss in public debate: US cloud providers cannot offer an absolute guarantee that European data will never be reachable through US legal mechanisms. The CLOUD Act is central to that debate. In testimony before the French Senate, Microsoft France’s general manager stated under oath that he could not guarantee French citizens’ data was protected from US authority access. Google, Amazon, and Salesforce have made similar acknowledgments in other contexts. That tension between the CLOUD Act and European data-protection expectations is shaping infrastructure decisions in both the public and private sectors.

NIS2: cybersecurity supply-chain obligations

The NIS2 Directive, which entered into force in January 2023 and had to be transposed by member states by 17 October 2024, explicitly requires organizations in critical sectors to assess and manage cybersecurity risks introduced by their supply chains, including cloud service providers. In practice, this creates a formal obligation to evaluate concentration risk and, in some cases, to maintain control over critical infrastructure components. ENISA’s annual Threat Landscape report is a widely used European reference for these risk assessments and consistently ranks supply-chain attacks and cloud-infrastructure threats among the top concerns. Regulators increasingly expect documented evidence that cloud dependencies have been assessed and that alternatives exist.
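One simple way to put a number on concentration risk is the Herfindahl-Hirschman Index, borrowed from competition economics. It is the sum of squared shares, and it approaches 1.0 as everything lands on a single provider. NIS2 does not prescribe this or any other particular metric. The sketch below, with a hypothetical workload inventory and review threshold, is only one way to make the assessment repeatable. Each workload counts equally here; weighting by criticality would be a natural refinement.

```python
from collections import Counter

REVIEW_THRESHOLD = 0.5  # hypothetical internal trigger, not a regulatory value


def provider_concentration(critical_workloads: dict[str, str]) -> tuple[float, str]:
    """Return the HHI of critical workloads across providers and the largest provider."""
    counts = Counter(critical_workloads.values())
    total = sum(counts.values())
    shares = {provider: n / total for provider, n in counts.items()}
    hhi = sum(share ** 2 for share in shares.values())
    largest = max(shares, key=shares.get)
    return hhi, largest


# Hypothetical mapping of critical workloads to where they run.
workloads = {f"service-{i}": "hyperscaler-a" for i in range(7)}
workloads.update({"billing": "hyperscaler-b", "reporting": "hyperscaler-b", "core-ledger": "on-prem"})

hhi, largest = provider_concentration(workloads)
print(f"HHI={hhi:.2f}, largest={largest}, review={'yes' if hhi > REVIEW_THRESHOLD else 'no'}")
```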

DORA: exit strategies for financial services

The Digital Operational Resilience Act entered into force in 2023 and has applied since 17 January 2025. It requires financial institutions to demonstrate that they can continue operating through severe disruption involving major technology providers. That means maintaining documented exit strategies and preserving business continuity even when a critical ICT provider becomes unavailable. For banks, insurers, and investment firms, this has accelerated investment in hybrid architectures with a credible on-premises fallback layer.

EU Data Act: the newest layer

The EU Data Act entered into force on 11 January 2024 and has applied since 12 September 2025. Among other things, it requires providers of data-processing services to reduce barriers to switching and to take legal, technical, and organizational measures against unlawful third-country access to non-personal data held in the EU. That does not eliminate lock-in overnight, but it should make switching providers, and eventually exiting, easier than before.

Taken together, these regulations are reshaping the European compliance environment. Cloud-only architectures face increasing scrutiny, and the ability to demonstrate local control over data is becoming both a competitive and a legal differentiator.

Cloud-also, not cloud-only

The real problem is that “cloud-first” quietly became “cloud-always”, and many organizations are now paying for that simplification in ways they never fully modeled up front.

The market itself has absorbed this lesson. The hybrid cloud market was valued at approximately $85 billion in 2022 and is projected to reach $262 billion by 2027. Almost no organization repatriating workloads today is abandoning cloud entirely. They are building architectures that put each workload where it belongs. Cloud for elastic, innovation-heavy, globally distributed services. On-premises or private cloud for steady-state, data-intensive, compliance-critical, and proprietary-AI workloads.
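The placement logic in that paragraph can be written down as a simple scoring rule. This is a deliberately naive sketch. The `Workload` fields mirror the criteria listed above, every signal counts equally, and ties go to a human. A real assessment would also weigh cost, latency, team skills, and contractual constraints.

```python
from dataclasses import dataclass


@dataclass
class Workload:  # hypothetical profile using the criteria named in the text
    name: str
    elastic_demand: bool = False
    globally_distributed: bool = False
    steady_state: bool = False
    data_intensive: bool = False
    compliance_critical: bool = False
    proprietary_ai: bool = False


def suggest_placement(w: Workload) -> str:
    """Count cloud-favoring vs on-prem-favoring signals; equal counts need a human decision."""
    cloud_signals = sum([w.elastic_demand, w.globally_distributed])
    on_prem_signals = sum([w.steady_state, w.data_intensive, w.compliance_critical, w.proprietary_ai])
    if cloud_signals > on_prem_signals:
        return "public cloud"
    if on_prem_signals > cloud_signals:
        return "on-premises / private cloud"
    return "needs review"


print(suggest_placement(Workload("storefront", elastic_demand=True, globally_distributed=True)))
print(suggest_placement(Workload("ledger", steady_state=True, data_intensive=True, compliance_critical=True)))
```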

The better framework is simpler than it sounds: right workload, right environment. It takes more architectural discipline up front, but it produces better outcomes over time.

For European organizations, it goes beyond good engineering. Under GDPR, NIS2, DORA, and the EU Data Act, it may be the only defensible position left.