When the city becomes the weapon: IoT, AI, and the new face of warfare

There is a quiet assumption most of us carry around about the devices that fill our cities. Traffic cameras sit on their poles to catch speeding drivers. Smart sensors monitor air quality for public health dashboards. Cellular towers connect us to family and work. These things feel mundane, civic, even boring. They are infrastructure, not weapons. And yet the events that unfolded in Tehran in late February 2026 tore that assumption apart with a precision that no missile alone could have achieved. What happened in Iran was not simply a military strike. It was a demonstration, brutal and technically remarkable, that the digital nervous system of a modern city is simultaneously its greatest vulnerability and a perfectly functional targeting platform for anyone patient enough to exploit it.

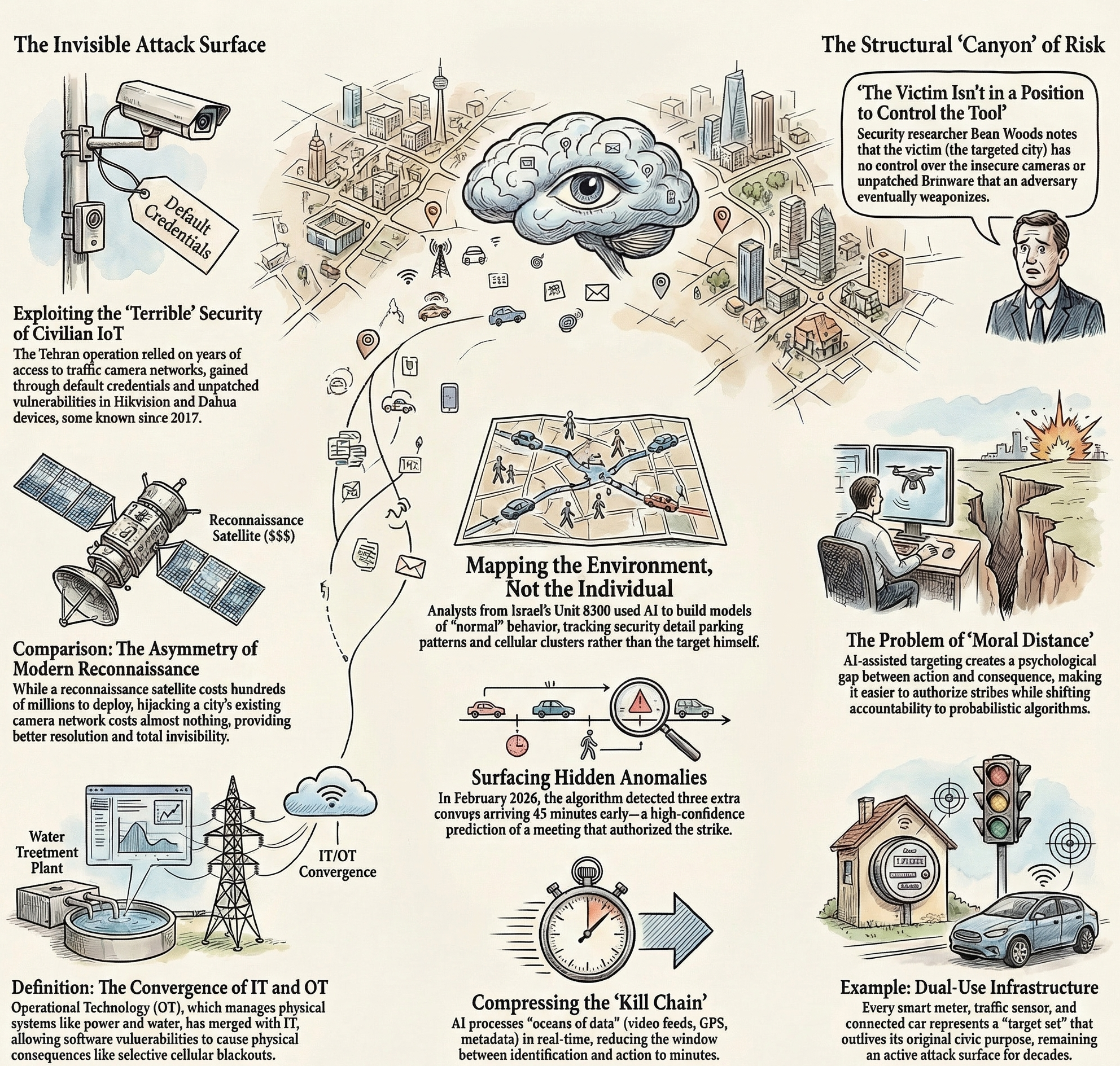

Understanding what happened, and more importantly what it means for the rest of us, requires moving past the geopolitical headlines and looking closely at three interlocking problems: the catastrophic insecurity of civilian IoT and OT infrastructure; the weaponisation of artificial intelligence for lethal targeting; and the ethical abyss that opens up when these two technologies converge on a battlefield that no one voted to build.

The invisible attack surface hiding in plain sight

The operation that led to the killing of Iran’s supreme leader Ali Khamenei on February 28, 2026, was months, possibly years, in the making. Reporting and a detailed analysis by Check Point Research indicate that Israeli intelligence, working in coordination with the CIA, had achieved something that sounds like the plot of a techno-thriller but is, in fact, a textbook application of well-understood offensive cyber techniques: they had gained access to nearly all of Tehran’s traffic camera network, and had been quietly mirroring its video feed to servers thousands of kilometres away for an extended period before any missile was fired.

The way they did it matters enormously. It was not accomplished through some classified exploit known only to the world’s most elite signals intelligence units. Rather, it succeeded largely because the systems protecting civilian urban infrastructure are, to put it plainly, terrible.

IoT devices, the broad category that includes everything from smart streetlights to networked CCTV cameras, share a set of structural weaknesses that the security industry has been warning about for well over a decade. Default credentials that are never changed by the administrators who install them. Firmware that ships with known vulnerabilities and receives patches that are never applied, either because the update process is cumbersome or because the organisation responsible simply does not have the resources or the culture to maintain it. Flat network architectures where compromising one device opens the door to an entire connected system. And, critically, the fact that these devices often connect directly to centralised data aggregation servers, which become enormously high-value targets once an attacker has a foothold anywhere in the network.
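From a defender's side, the weaknesses listed above are all things an operator can check for in their own inventory. The following is a minimal sketch of such an audit, not a real tool: the `Device` record, the `DEFAULT_LOGINS` list, the VLAN numbers and the sample inventory are all hypothetical, and a real audit would pull this data from an asset-management system and a vendor-specific credential list.

```python
from dataclasses import dataclass

@dataclass
class Device:
    name: str
    username: str
    password: str
    firmware: tuple[int, int, int]         # installed version (major, minor, patch)
    latest_firmware: tuple[int, int, int]  # newest release from the vendor
    vlan: int                              # network segment the device sits on

# Hypothetical examples of factory logins; illustrative only.
DEFAULT_LOGINS = {("admin", "admin"), ("admin", "12345"), ("root", "root")}

def audit(devices, server_vlan):
    """Return {device name: [issues]} for the three weaknesses discussed above."""
    findings = {}
    for d in devices:
        issues = []
        if (d.username, d.password) in DEFAULT_LOGINS:
            issues.append("default credentials")
        if d.firmware < d.latest_firmware:  # tuples compare element by element
            issues.append("firmware out of date")
        if d.vlan == server_vlan:           # flat network: no segment boundary
            issues.append("shares segment with aggregation server")
        if issues:
            findings[d.name] = issues
    return findings

# Made-up inventory for illustration.
inventory = [
    Device("cam-01", "admin", "12345", (5, 4, 0), (5, 7, 2), vlan=10),
    Device("cam-02", "ops", "x9!Lq2#v", (5, 7, 2), (5, 7, 2), vlan=20),
    Device("light-07", "root", "root", (1, 0, 3), (1, 0, 3), vlan=20),
]

report = audit(inventory, server_vlan=10)
for name, issues in report.items():
    print(name, "->", ", ".join(issues))
```

In this made-up inventory, `cam-01` is flagged on all three counts, `light-07` only for its factory login, and `cam-02` passes. The point is that none of the checks is difficult; what is missing in practice is the resources and culture to run them.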

Check Point’s research, published in early March 2026, documented hundreds of attempts by groups linked to Iranian intelligence to hijack consumer-grade cameras across the Middle East, exploiting five distinct vulnerabilities in Hikvision and Dahua devices. None of those vulnerabilities were sophisticated. All of them had been patched in previous software updates. Some had been publicly known since 2017. The attacks worked because the cameras had never been updated, because their owners had no idea they were exposed, and because the manufacturers had long since moved on to selling the next model. As Sergey Shykevich, head of threat intelligence at Check Point, put it: “Now hacking cameras has become part of the playbook of military activity. You get direct visibility without using any expensive military means such as satellites, often with better resolution.”

The asymmetry here is striking. Deploying a reconnaissance satellite costs hundreds of millions of dollars and requires years of development. Hijacking a traffic camera installed to catch illegal U-turns costs essentially nothing, and the target’s own government paid for the installation. “The adversary’s already done the work for you,” observes Peter W. Singer, a military researcher at the New America Foundation. “They’ve placed cameras all around a city.” And unlike drones, which are detectable and only viable when air defences are minimal, a hacked camera is invisible. It sits in its mounting bracket, does exactly what it was always doing, and silently forwards a copy of everything it sees to whoever is watching.

The OT dimension of this is equally important and even less discussed. Operational technology, the industrial control systems that manage physical infrastructure like power grids, water treatment plants, traffic management systems and telecommunications networks, has been converging with standard IT networks for years, driven by efficiency and the promise of remote management. That convergence has created environments where a software vulnerability in a vendor’s update server can translate directly into physical consequences: a factory line that stops, a power grid that goes dark, a cellular tower that goes offline on demand. The reported jamming and selective shutdown of mobile towers around Khamenei’s compound in the minutes before the strike was not a physically destructive act in itself. It was a cyber-physical operation, one that used access to network infrastructure to create a window of silence in which a kinetic attack could land without warning. The OT layer of a city, the systems that make it actually function in the physical world, had become part of the kill chain.

Pattern of life: teaching an algorithm to predict a human target

Having access to a city’s camera network is one thing. Doing something useful with thousands of hours of low-resolution traffic footage from a metropolis of eight million people is an entirely different challenge. The human analysts who traditionally work in signals intelligence and surveillance are extraordinarily skilled, but they are not capable of processing that volume of data in anything approaching real time. The solution, as has become standard across modern intelligence operations, is machine learning.

The Israeli Unit 8200, the country’s signals intelligence corps, is widely regarded as one of the most technically advanced organisations of its kind in the world. The systems its analysts reportedly deployed against Tehran represent a particular application of AI that has become central to modern targeting operations: pattern-of-life analysis. The concept is straightforward even if the implementation is extraordinarily complex. Rather than attempting to locate a specific individual directly, you build a model of their environment. You track the people around them, the vehicles they use, the times at which specific activities occur. You cross-reference video data with cellular metadata, GPS signals, and human intelligence from sources on the ground. Over time, the model learns what normal looks like, and it becomes sensitive to anomalies.

In the case of the operation against Khamenei, reporting suggests that analysts were not primarily tracking the supreme leader himself. They were watching the parking patterns of his security detail on the streets near his compound in Tehran’s Pasteur Street area. A camera that had been installed to monitor traffic flow and issue fines to drivers had, over months of observation, produced a detailed map of the habits of the people whose job was to keep Khamenei alive. When three extra convoys appeared forty-five minutes earlier than usual, and when the cellular signals of senior commanders clustered in the same cell tower coverage area, the algorithm produced a high-confidence prediction that a significant meeting was taking place. That prediction, confirmed by a CIA source on the ground, was sufficient to authorise the strike.

This is what the automation of targeting actually looks like. It is not a robot deciding who to kill. It is a system that processes an ocean of data and surfaces patterns that would be invisible to human observation alone, compressing the time between identification and action to a window measured in minutes rather than hours or days. The speed matters, because a target that remains in a confirmed location for sixty seconds is a viable target in a way that one whose movements are uncertain is not.

The AI involved here is not especially exotic. The underlying techniques (convolutional neural networks for video analysis, graph analysis for social network mapping of personnel, and anomaly detection on time-series data) are taught in graduate computer science programmes and have been in industrial use for years. What makes them dangerous in a military context is not their novelty but their scale and their integration. When you combine real-time access to hundreds of cameras with the ability to cross-correlate that data against intercepted communications metadata and ground-level intelligence, you get a system that can maintain persistent surveillance over an entire city and flag targets of interest with a reliability that manual analysis could never match.
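To show how ordinary the building blocks are, here is a textbook z-score anomaly check on a generic time series, of the kind used in traffic engineering or network monitoring. It is a minimal sketch under stated assumptions: the function name, the threshold of 3.0 and the sample numbers are all illustrative, and nothing here is claimed to be what any intelligence service actually runs.

```python
from statistics import mean, stdev

def flag_anomalies(history, recent, threshold=3.0):
    """Flag readings in `recent` that deviate strongly from the `history` baseline.

    Returns a list of (index, value, z_score) tuples.
    """
    mu = mean(history)
    sigma = stdev(history)  # sample standard deviation of the baseline
    if sigma == 0:
        return []           # a constant baseline gives no scale to measure against
    flagged = []
    for i, value in enumerate(recent):
        z = (value - mu) / sigma
        if abs(z) > threshold:
            flagged.append((i, value, round(z, 2)))
    return flagged

# Illustrative numbers: counts per interval from some sensor.
baseline = [12, 11, 13, 12, 14, 12, 11, 13, 12, 12]
new_readings = [12, 13, 21, 11]

anomalies = flag_anomalies(baseline, new_readings)
print(anomalies)
```

Only the third new reading (21) stands far enough from the baseline to be flagged. A flag of this kind says that something is unusual; it says nothing about why.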

Beau Woods, a security researcher and former adviser to the US Cybersecurity and Infrastructure Security Agency, has identified a structural problem that makes the civilian dimension of this particularly troubling. “The manufacturer of the device and the owner of the device are not the victim,” he notes. “So the victim isn’t in a position to control the tool that’s used by the adversary.” The chain of consequence runs from a camera installer who never changed the default password, through a vendor who stopped pushing firmware updates, to an intelligence analyst in a foreign country who is now watching the street outside a world leader’s residence. No single actor in that chain intended to build a targeting system. Together, they did.

Cyber as the first mover, and the shape of full-spectrum warfare

What makes the Iran operation unprecedented is not any single technique. Camera hacking, cellular network interference, and cyberattacks on radar and air-defence systems all have precedents. What is new is the degree to which they were integrated into a single, coordinated operation in which the digital and physical components were inseparable.

A Unit 42 threat brief and statements from US defence officials described how the operation had been enabled by months and in some cases years of preparatory work, building what was called the “target set.” The cyber components were described as the “first movers,” disrupting Iran’s ability to see, communicate, and respond before any aircraft entered Iranian airspace. US defence officials said members of the Iranian military were unable to coordinate and respond effectively.

Ukraine has provided a prolonged real-world laboratory for these same techniques. Russian forces hacked security cameras in Kyiv to observe Ukrainian air defences and infrastructure targets, prompting Ukrainian intelligence to attempt to disable tens of thousands of internet-connected cameras that could be exploited for reconnaissance. Ukrainian hackers, for their part, reportedly hijacked Russian cameras to monitor troop movements and the transport of military equipment across the Kerch Bridge. The tactic has become so normalised that security researchers tracking the Iran conflict were unsurprised to find it in use on both sides almost immediately after hostilities escalated.

The feedback loops that emerge from this kind of warfare are dizzying. After Khamenei’s death, Iran imposed a severe internet blackout, cutting off its population from communications at precisely the moment when people most needed to reach their families and understand what was happening around them. According to human rights organisations, the blackout contributed to a civilian death toll that exceeded 1,100 in its first days. Simultaneously, reports indicated Iranian state-linked hacking groups used commercial satellite terminals to maintain communications and continue launching cyberattacks against Western targets, circumventing the very blackout their government had imposed on ordinary citizens. The regime was using network disruption as a weapon against its own population while using commercial satellite networks to keep some offensive cyber operations running. The absurdity of that situation tells you something important about how contemporary warfare actually distributes its costs.

Tal Kollender, a former Israeli military cyber-defence specialist and founder of the cybersecurity platform Remedio, offered a formulation that cuts through a lot of the noise: “Cyber isn’t usually the decisive weapon on its own; it’s a force multiplier that helps shape the information environment and supports operations happening on the ground.” That is accurate, but it perhaps understates how thoroughly cyber operations have been woven into the fabric of conventional military action. The strike on the Tehran compound did not happen despite the cyber dimension. It happened because of it.

The ethics of algorithmic war, and the world we are building

The arguments made by proponents of precision, data-driven military operations are not stupid arguments. They deserve to be engaged seriously before being challenged. The central claim is this: modern targeting technology, when it works as intended, can reduce civilian casualties dramatically compared to the area bombardments that characterised twentieth-century warfare. If intelligence systems can confirm with high confidence that a specific person is in a specific building at a specific time, the alternative to a precision strike is not no strike. It is a wider strike, or a ground invasion, or sustained aerial bombardment. The comparison is not between AI-assisted targeting and a peaceful world. It is between AI-assisted targeting and the methods that flattened Dresden, or levelled Fallujah.

This argument has genuine force. But it carries hidden assumptions that erode quickly under examination.

The first assumption is that the algorithm is accurate. Pattern-of-life analysis is probabilistic, not deterministic. The systems described as producing “99% confidence” assessments are, in practice, systems trained on data that reflects the biases and gaps of the collection process. An anomaly in parking patterns that suggests a high-level meeting might just as plausibly reflect a wedding, a family medical emergency, or a shift change. The consequences of misidentification in a military context are permanent and irreversible. Mariarosaria Taddeo, professor of digital ethics and cyber security at the Oxford Internet Institute, has written extensively about precisely this risk in her book The Ethics of Artificial Intelligence in Defence: AI systems in military contexts create what she describes as a moral distance between action and consequence, a distance that makes it easier to act and harder to bear responsibility for the outcome.
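A short worked example makes the probabilistic point concrete. The numbers are assumptions chosen for illustration, not figures from any reporting: suppose a detector raises an alert $A$ on 99% of the mornings when a high-level meeting $M$ really is taking place, raises a false alert on 1% of the mornings when it is not, and that such meetings happen on roughly one morning in a thousand. Bayes' rule then gives the probability that an alert corresponds to a real meeting:

$$
P(M \mid A) = \frac{P(A \mid M)\,P(M)}{P(A \mid M)\,P(M) + P(A \mid \neg M)\,P(\neg M)} = \frac{0.99 \times 0.001}{0.99 \times 0.001 + 0.01 \times 0.999} \approx 0.09
$$

Under these assumed numbers, about nine alerts in a hundred are genuine, even though the detector is "99% accurate" in both directions. Rare events are swamped by false alarms unless the alert is confirmed by an independent source, which is why a single headline confidence figure can mislead.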

The second assumption is that the capability is stable, that it belongs to responsible actors, and that it will remain so. The techniques used against Tehran are not secret. They are documented, taught, and reproducible by any state, and an increasing number of non-state actors, with sufficient technical resources. The security vulnerabilities in Hikvision and Dahua cameras that Check Point documented in March 2026 were not created for military use. They exist because nobody fixed them. The same cameras are installed in cities across Europe, North America and Asia. The same cellular infrastructure is in use everywhere. The same AI models for pattern-of-life analysis are commercially available and being integrated into urban management systems as legitimate smart-city technology. The capability gap between a superpower intelligence operation and a well-funded terrorist organisation, or an authoritarian government targeting its own dissidents, is not as large as we might hope.

The third assumption, perhaps the most dangerous, is that the ethical and legal framework governing these operations keeps pace with their technical development. It clearly does not. Dr Louise Marie Hurel from the Royal United Services Institute has made the argument that this war represents an opportunity, not a comfortable one, but a real one, for a more public debate about cyber operations as integral components of military campaigns. “If cyber is openly acknowledged as integral to the strike package,” she has written, “it can help sharpen the questions about the laws of armed conflict, proportionality, and what counts as a use of force.” Right now, those questions are being answered by the people carrying out the operations, which is not how the laws of war are supposed to work.

There is also a question that goes beyond the battlefield entirely. Every smart city initiative, every IoT deployment, every decision to connect physical infrastructure to an internet-accessible network creates an attack surface that outlives its original purpose. The traffic cameras in Tehran were installed for traffic management. The cellular towers were built to provide communications. The sensor networks that feed urban analytics platforms were deployed to improve the quality of urban life. None of their designers imagined them as components of a targeting system. But that is what they became, not because of some extraordinary act of malice, but because they were connected, because they were insecure, and because someone patient enough to wait had access to them.

The question for every city that is currently rolling out smart infrastructure, which is to say essentially every city on earth, is whether that deployment is accompanied by a security posture commensurate with the risk. The answer, nearly everywhere, is no. The vendor landscape for IoT devices is fragmented, poorly regulated, and dominated by cost pressures that consistently deprioritise security. Software update cycles are inconsistent. Network segmentation is rare. Incident response planning for smart-city components barely exists. The gap between the sophistication of the attacks that are now demonstrably possible and the defences that most urban infrastructure operators have in place is not a gap. It is a canyon.

Living in the target

There is a final dimension to all of this that tends to get lost in the operational detail, and it is the one that matters most for ordinary people who are not military planners, intelligence analysts, or policymakers. The infrastructure that was weaponised against Tehran is functionally identical to the infrastructure that surrounds us. The cameras above our intersections. The cellular networks our phones connect to. The sensors in the bus we take to work. The smart meters on our homes. Modern cars that continuously transmit location and diagnostic data to manufacturer cloud servers. The ambient digital environment of a connected city is, from a purely technical standpoint, the same kind of environment that intelligence services spent years systematically compromising in Iran.

That does not mean that Western cities are under equivalent surveillance, or that citizens of democracies face the same risks as those living under authoritarian regimes. It does mean that the technical capability to conduct that kind of surveillance exists, is being actively refined, and is not exclusive to any particular set of actors. Security researchers have repeatedly demonstrated that consumer-grade cameras, smart building systems, and industrial control devices accessible from the internet can be compromised by attackers with modest skills and freely available tools. The barriers are not technical. They are economic and organisational, and they are not holding.

What happened in Tehran deserves to be understood as more than a geopolitical event. It is a proof of concept, and a deeply unsettling one, for a world in which the boundary between the digital and the physical has effectively ceased to exist. The question of how we build and defend urban infrastructure going forward is not a niche concern for security professionals. It is a question about what kind of cities we want to live in, who controls the systems that make them function, and whether the convenience of connectivity is worth the risks that come with it.

The answer to that last question may turn out to be yes, on balance. But it should be a choice made with full awareness of what those risks actually are, not an assumption inherited from a decade ago when the threat model was still theoretical. It is not theoretical anymore.