All code is sorcery, until it isn't

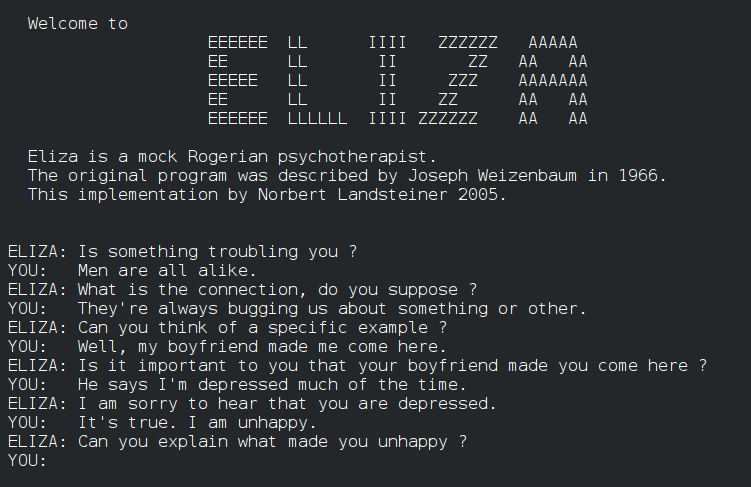

In 1966, Joseph Weizenbaum created a program called ELIZA. It was, by any technical measure, trivial: a pattern-matching engine that reflected the user’s words back as open-ended questions, mimicking the style of a Rogerian therapist. Weizenbaum expected it to be a curious demonstration. What he got instead was a lesson in human psychology that he spent the rest of his life trying to explain. His own secretary, after a few minutes with the program, asked him to leave the room so she could speak to it in private. Users formed attachments. They attributed understanding, empathy, even wisdom to a mechanism that had none. Weizenbaum was horrified, and he said so, loudly, for decades.

Sixty years later, people are having emotional conversations with large language models, grieving when their AI companion is updated or discontinued, and arguing about whether these systems deserve moral consideration. The phenomenon Weizenbaum identified has not evolved. It has simply found more powerful hardware to run on.

ELIZA and the original illusion

The ELIZA effect, as it came to be known, is not a product of naivety or low intelligence. It is a feature of human cognition. We are, as a species, extraordinarily good at detecting patterns and extraordinarily inclined to interpret any pattern that resembles responsive behaviour as the sign of an intentional agent. This tendency served our ancestors well in environments where overestimating agency in a rustling bush was less costly than underestimating it. In an environment populated by sophisticated conversational software, it becomes a liability.

What makes Weizenbaum’s case particularly striking is that the illusion persisted even in people who understood perfectly well how the program worked. Knowing the mechanism did not dissolve the effect. This is important to keep in mind, because a great deal of the contemporary debate about AI treats the perception of machine intelligence as something that can be dispelled simply by explaining the statistics. It cannot. The response is not intellectual; it is pre-intellectual, lodged at a level of cognition that reasoning alone does not reach.
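How little there was to understand is easy to show. What follows is a minimal sketch of the general technique, pattern matching plus pronoun reflection, written in Python. It is not Weizenbaum's original script: the rules, the `REFLECTIONS` table and the function names `reflect` and `respond` are all hypothetical, chosen only to illustrate the idea.

```python
import re

# Hypothetical word swaps so that the user's phrase can be mirrored back.
REFLECTIONS = {"i": "you", "me": "you", "my": "your", "am": "are"}

# Hypothetical rules: a pattern to look for and a question template.
# These are illustrative only, not taken from the original ELIZA script.
RULES = [
    (re.compile(r"\bi feel (.*)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"\bi am (.*)", re.IGNORECASE), "How long have you been {0}?"),
]

# Used when no rule matches; the program has nothing else to fall back on.
FALLBACK = "Please go on."


def reflect(fragment):
    """Swap first-person words for second-person ones, word by word."""
    words = fragment.lower().split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(text):
    """Return the first matching template filled with the reflected phrase."""
    cleaned = text.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(cleaned)
        if match:
            return template.format(reflect(match.group(1)))
    return FALLBACK
```

Calling `respond("I feel ignored by my family.")` returns `"Why do you feel ignored by your family?"`, and anything the rules do not cover gets `"Please go on."`. There is no model of the user, of families, or of feelings anywhere in it, which is exactly the point: the sense of being listened to is supplied entirely by the person at the keyboard.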

Weizenbaum spent the years after ELIZA writing Computer Power and Human Reason (1976), which remains one of the most lucid accounts of what computers can and cannot do, and more importantly, what they should and should not be asked to do. He argued that certain kinds of decisions, those requiring genuine understanding, genuine empathy, genuine moral judgment, ought never to be delegated to machines. Not because machines will necessarily get them wrong, but because the delegation itself represents a kind of abdication of human responsibility. His colleagues in computer science largely ignored him. The field was accelerating, and caution was not fashionable.

The anthropology of the programmer

To understand why the ELIZA effect runs so deep among the people who build these systems, not just among their users, it helps to look at what the experience of programming actually does to those who practice it.

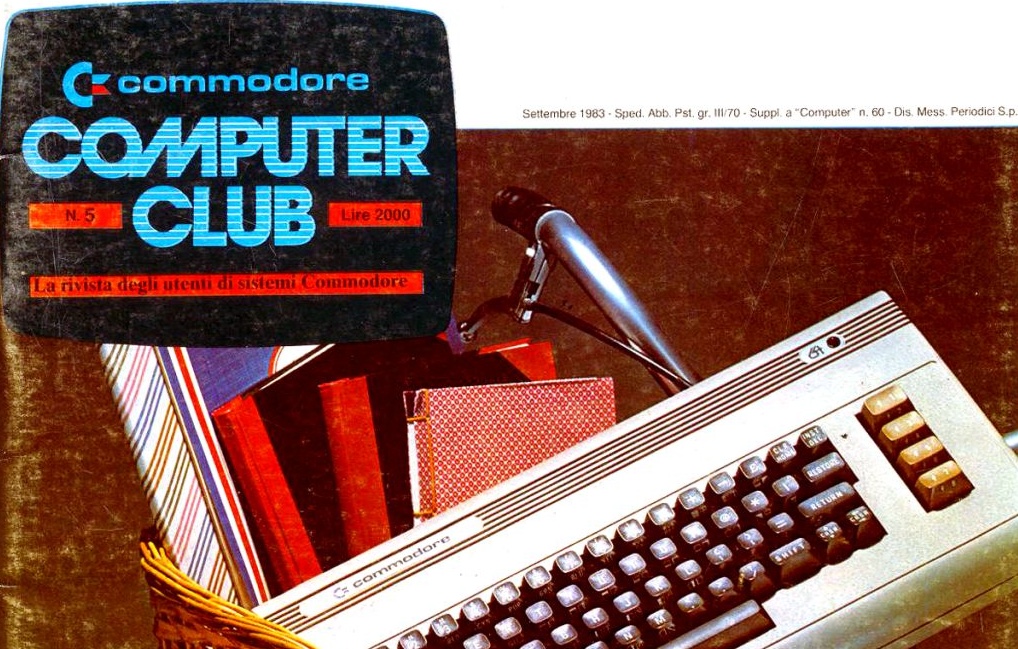

I started programming around the age of eight, on a Commodore 16. The machine came with a manual that contained BASIC listings: small programs, printed on paper, that you could type in by hand. Later, the same ritual extended to magazines, above all Commodore Computer Club, the Italian publication that was essentially a bible for home computer enthusiasts of that era. Each program began as a sequence of characters on paper, and the act of transcribing it, line by line, number by number, was your first lesson in what computers actually demand. A single wrong digit and nothing worked. Not a polite failure, not a partial result: nothing. The machine offered no negotiation.

This is still, I think, the most honest introduction to programming that exists, because it makes the fundamental relationship completely visible. The computer does not accept approximate instructions or infer intent. A misplaced character, a single missing bracket, and everything stops. The first extended encounter with this produces, in most people, something that looks remarkably like grief: denial that the error could be theirs, anger at the machine, bargaining, a low-grade depression, and eventually acceptance. The thing one accepts is not the limitation of the computer. It is the necessity of meeting the machine entirely on its own terms.

This is a genuinely transformative experience, and the transformation is not only technical. After the Commodore 16 came the Amiga, and with it a different quality of fascination. BASIC on the Amiga was still BASIC, but the machine’s capacity for graphics, sound, and multimedia gave the act of programming a new dimension. You were no longer just making numbers appear on a screen. You were generating images, synthesising music, composing small worlds that moved and sounded. The sense of being a creator, of producing something that had not existed a moment before, was qualitatively different from anything a typewritten listing could convey.

On the other side of that initiation lies the discovery at the heart of programming: the gap between “do what I say” and “do what I mean”. Machines operate exclusively in the first register. Human beings communicate almost entirely in the second. Every programming language in history has been, among other things, an attempt to narrow this gap, and the most successful have perhaps covered a few centimetres of the enormous distance between the two. Once a programmer internalises this, something changes in the way they perceive their own work. They have learned to produce, with precision, the specific words that cause matter to behave in the ways they intend. From the inside, this feels less like engineering and more like craft.

The practitioner and the craft

This is not a casual or metaphorical observation. In 1984, Harold Abelson and Gerald Jay Sussman published Structure and Interpretation of Computer Programs, the textbook for the introductory programming course at MIT. It is known in hacker culture as the “Wizard Book”, after the robed figure, pointed hat and crystal ball included, on its cover. The dedication reads: “This book is dedicated, in respect and admiration, to the spirit that lives in the computer.” These are not the words of mystical amateurs. They are the words of two of the most rigorous computer scientists of their generation, and they tell you something genuine about the way even serious practitioners relate to the act of programming. Arthur C. Clarke’s third law, that any sufficiently advanced technology is indistinguishable from magic, is usually cited with reference to the outside observer. Among practitioners, the inside view is not so different.

My own path through programming languages reinforced this at each step. Moving from BASIC to Pascal brought structure and clarity, a sense of building cathedrals rather than tents. Then came 8086 Assembly, and with it something closer to the esoteric in its purest form. Assembly is the language in which you speak directly to the processor, addressing registers, manipulating memory at the most granular level, writing instructions that map almost one-to-one to the machine’s native operations. Mastering it felt genuinely arcane. Not because it was mysterious, but because so few people were willing to learn it, and because the gap between what you wrote and what the machine did had shrunk to almost nothing. In Assembly, you were not casting a spell through an interpreter. You were writing the spell in the machine’s own tongue.

The linguistic environment of computing reinforces this at every level. Background processes in Unix and Linux are called daemons. Commands are executed. The programmer who knows the most arcane commands and their most obscure parameters occupies, in the culture of computing, a position structurally identical to that of the initiate who has mastered the most powerful spells. In the era of command-line interfaces, this was explicit: the neophyte who used only standard options was looked upon with mild contempt by the experienced, and the acquisition of esoteric knowledge was treated as a rite of passage. Graphical interfaces, Windows with its layers of menus, and later Linux with its terminal culture, changed the surface but not the substance. The capacity to navigate the labyrinth still marks the difference, inside the culture, between the adept and the profane.

The compulsive programmer and the seduction of inner worlds

Weizenbaum identified a second pathology alongside the ELIZA effect, one that does not require a conversational interface to manifest. He called it the compulsive programmer: the person for whom working at the computer has become an end in itself, driven by something closer to compulsion than to purpose. The world inside the machine, with its precise rules and manageable complexity, becomes a refuge from the rougher, less controllable complexity of the world outside. What the software actually does for real people, the consequences it has out there, becomes secondary, or simply irrelevant.

I recognise this pull from my own experience. Moving through Visual Basic on Windows, then C and C++ on Linux, and eventually into enterprise environments where the work involved RPG, COBOL, Perl, and Python, I observed how each transition brought its own gravity. Each language had its idioms, its elegance in certain domains, its ways of rewarding deep familiarity. The temptation was always there to go deeper into the tool rather than forward toward the result, to refactor something already working because the new version would be cleaner, to explore a feature that served the pleasure of the programmer rather than the need of the user. The inner world of the machine is always more tractable than the outer world it is supposed to serve, and this is precisely what makes it so seductive.

This is recognisable to anyone who has spent serious time in engineering teams. It appears as the unmotivated refactoring of working software, battle-tested for years or decades, rewritten from scratch in the fashionable language or framework of the season, not because the old version had concrete problems, but because the programmer wants to master the new tool. It appears as months spent on architectural elegance in systems that users will never directly see, while features people actually need remain unbuilt. There is a reason the explosion of role-playing games like Dungeons & Dragons happened almost simultaneously with the arrival of personal computers, and involved substantially the same population. Both offer systems for the production of alternative worlds governed by learnable, masterable rules, worlds where expertise confers power and where the unpredictability of ordinary life can be held at bay. The appeal is genuine, and in moderate doses it is productive. The problem arises when the inner world becomes more real than the outer one, and when the fantasy of what the machine can do displaces the discipline of asking what it actually does.

The moment the spell seemed to come true

Into this cultural landscape, already saturated with the latent perception of computing as a magical practice, arrived large language models. They appeared at a moment when the gap between “do what I say” and “do what I mean” had been the central unsolved problem of human-computer interaction for seven decades, and they appeared, to many people, to solve it, or at least to perform a very convincing simulation of solving it.

There is something almost vertiginous, thinking back to those evenings in front of a Commodore 16, copying BASIC listings character by character from a magazine, in the contrast with what is possible today. The concept of vibe coding, popularised in early 2025 by Andrej Karpathy, former Director of AI at Tesla and former researcher at OpenAI, describes a workflow in which the developer writes natural language descriptions of what they want and the model produces code, which the developer then reviews, adjusts, and iterates. Karpathy suggested that this approach could “terraform software” and make programming accessible far beyond the circle of trained practitioners. As a description of a shifting division of labour in software development, this is a reasonable observation.

What matters more is the phenomenological effect of the experience. When someone writes a prompt in something close to plain language and receives back something close to the result they wanted, the interaction feels, at the level of direct experience, identical to issuing a command. You speak your intention, and the world responds. The probabilistic, approximate nature of what is happening underneath is invisible behind an interface that feels conversational. The prompt, in the mythology of prompt engineering, is described as a semi-formalised instruction that produces a deterministic effect on what remains, in every meaningful sense, a pseudorandom system. The gap between “do what I say” and “do what I mean” has not been closed. It has been concealed, and the concealment is remarkably effective.

The result is that the LLM functions as the reification of the sense of ‘magic’ that every person who has worked with computers has always, at some level, suspected was at work in their own practice. This applies to experienced engineers as much as to newcomers. The practitioner who has spent years feeling, somewhere in the background, like an operator of words that produce effects, someone who conjures rather than merely constructs, now holds a tool that appears to confirm that intuition directly. And crucially, this is not a matter of competence or critical awareness. It is a matter of a very old illusion finding, at last, a very persuasive physical form.

From illusion to ideology

The dangerous step is not experiencing the illusion. The dangerous step is building an ideology on top of it, one in which the fantasy of what the machine can do is treated as equivalent to what it actually does, and in which the world is expected to conform to the fantasy rather than the other way around.

The contemporary technology industry has produced a specific cultural formation in which this step has been taken, loudly, and with enormous financial resources behind it. When commentators such as Dario Amodei (see https://darioamodei.com/machines-of-loving-grace) suggest that AI systems could rewrite banking software built in COBOL and validated over decades of real-world use, the proposal lands differently on someone who has actually written COBOL in production environments. COBOL running in banks and insurance companies is not legacy code in the pejorative sense. It is code that has been tested against edge cases that the people proposing to replace it cannot even enumerate, encoding business rules accumulated over generations of incremental adjustment. The confidence with which its replacement is announced is the confidence of someone who has never had to maintain it. And when Microsoft discusses using Rust and AI assistance for parts of Windows or its tooling (see https://github.com/microsoft/windows-rs), what one hears is not an engineering roadmap but an expression of a worldview in which the excitement of a new tool outweighs any concern for the consequences of deploying it on systems that billions of real people depend on.

This pattern runs through the dominant narratives of digital culture over the past two decades with remarkable consistency. Social networks that promised to redefine human connection produced, in practice, surveillance capitalism, political polarisation, and a global infrastructure for engineered anxiety. Platforms promising to democratise finance, information, and expertise concentrated power while spreading only the visual language of democratisation. The rhetoric has always been about transformation, about the machine finally doing what the visionary always knew it could. The gap between promise and result has been systematically ignored, because acknowledging it would require stepping outside the fantasy.

It would be a mistake to explain all of this as pure cynicism or deliberate fraud. Some of it is. But much of it reflects something structurally more interesting: the genuine psychological dynamic that Weizenbaum spent his career documenting, scaled from the individual programmer to the level of institutional culture. People who have built their entire professional identity around the perception of technology as a magical instrument, capable of transforming intention directly into effect, are not well placed to critically evaluate claims made in the same register. That perception feels real because, in their experience, it has always felt real. The difference now is that there are sufficient resources and sufficient cultural authority behind the feeling to impose its consequences on everyone else.

What resistance actually looks like

Weizenbaum was not a Luddite. He used computers, he taught programming, and he understood very well what they were capable of. His argument was not against technology but against the abdication of judgment in its presence. Being exposed to a powerful illusion does not require surrendering to it. It is possible to use a language model, and genuinely benefit from doing so, without believing it understands anything. It is possible to work with AI-assisted tools without concluding that the nature of software development has been fundamentally transformed. It is possible to recognise the psychological pull of the magic metaphor and still insist on measuring systems by their actual effects on actual people.

Decades of programming, across languages and paradigms and contexts, from transcribing BASIC on an 8-bit machine to writing production code in enterprise systems, give a very specific kind of perspective on these claims. Each layer of abstraction, each new language, each new paradigm, felt at the time like a qualitative leap, a new kind of power. And each time, the fundamentals reasserted themselves: the machine still did only what you told it to do, the complexity still had to go somewhere, and the consequences of getting it wrong still fell on real people who had not been consulted about the experiment. The history of computing is full of tools that felt magical and turned out to be merely useful, or merely dangerous, or both.

This requires a specific kind of discipline, the same one that learning to program originally demanded: the willingness to look past the surface of the experience, to ask what is actually happening rather than what it feels like is happening, and to resist the comfortable certainty that because it feels real, it must be. That discipline is not glamorous. It does not produce keynote speeches or attract investment rounds. But it is the only foundation on which technology can be honestly evaluated, and when the time comes to assess what this current wave of enthusiasm has actually produced, it will be the people who maintained it who are in a position to say something true.

Weizenbaum’s secretary wanted to speak to ELIZA alone. Sixty years later, the machine has become considerably better at the conversation. The impulse has not changed at all.