Reading the ENISA secure by design playbook without the hype

There is a telling sentence buried deep inside the new ENISA Secure by Design and Default Playbook, published in March 2026 for public consultation: “security goals can often fail, even in the presence of good design, if there is a lack of tools that enable stakeholders to understand and assess security issues.” The observation sounds almost obvious, yet it captures exactly the failure mode that security architects encounter every day: good intentions on paper, incomplete execution in practice.

The document is 70 pages of dense, practical guidance aimed at SME manufacturers of products with digital elements, but its content is far more broadly useful than that target audience suggests. For anyone who has to translate security principles into real architectural decisions, the playbook is one of the most operationally useful things ENISA has published in years. It is worth reading carefully, and questioning with equal rigour.

Why this document matters right now

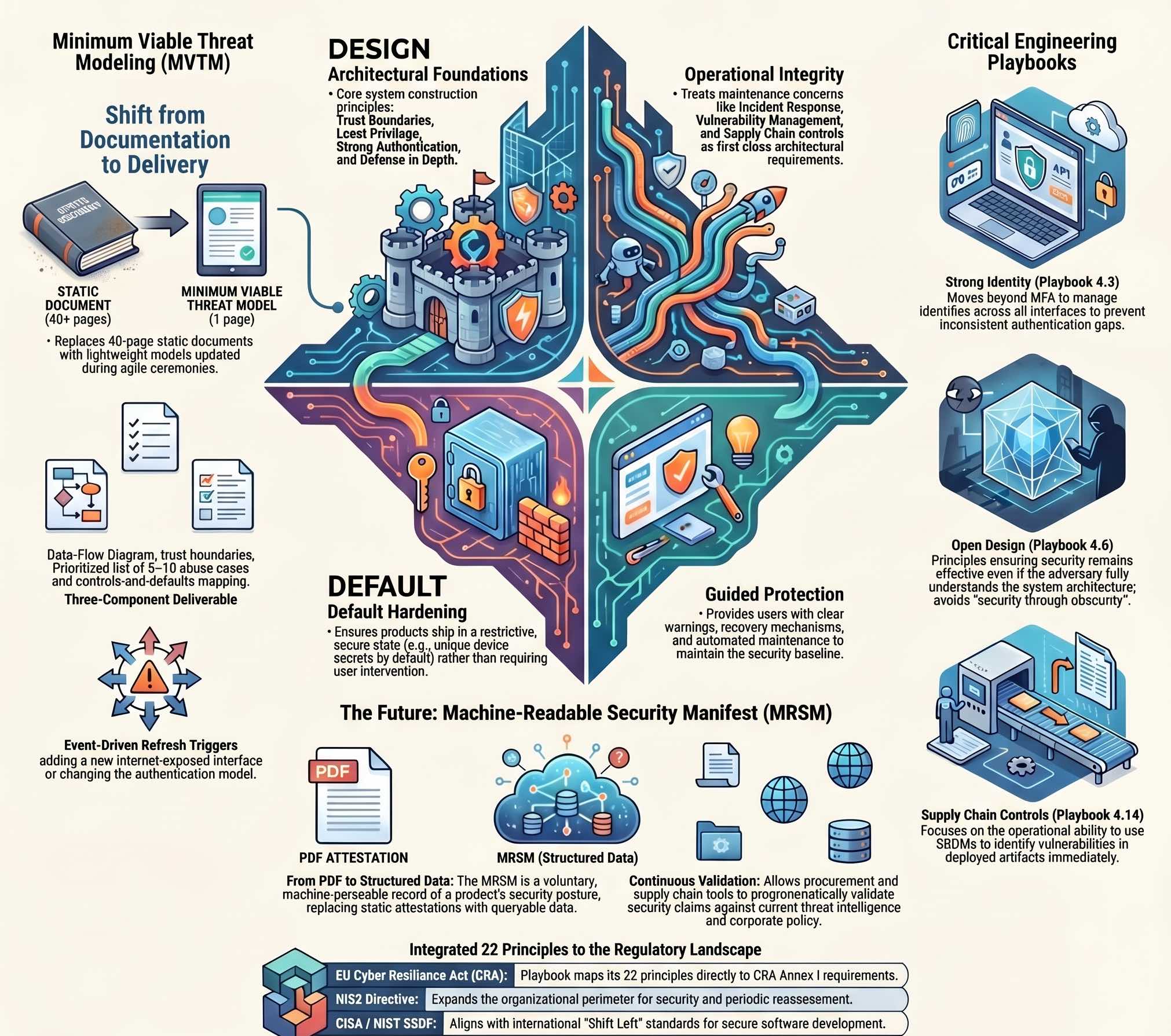

The EU Cyber Resilience Act (CRA) has been in force since 2024 and its transition periods are running out. Manufacturers of connected products, from consumer IoT to enterprise appliances to embedded systems, are now facing legally binding essential requirements on product security. The playbook explicitly maps its 22 principles to the CRA’s Annex I requirements, giving product teams a concrete bridge from regulatory obligation to engineering practice.

At the same time, NIS2 has expanded the perimeter of who has to take security seriously at the organizational level, and the EU AI Act is starting to create pressure on how security must be embedded into AI-enabled products. The days of bolting on a privacy statement and shipping are over; the European regulatory landscape has shifted permanently.

The playbook sits at the intersection of these pressures. It does not tell you how to comply with the CRA, which is a legal question, but it shows you what technically defensible implementation of secure-by-design principles looks like across a full product lifecycle. For a security architect, that distinction matters a lot: as I argued in a previous piece on the confidence gap in corporate cybersecurity, compliance is a floor, not a ceiling, and most organizations are better at satisfying the former than building towards the latter. The playbook is built for people who want to build something actually secure, not just something that passes a checklist.

The architecture of the playbook itself

The document organizes 22 principles into four groups, and understanding this taxonomy is the first thing to internalize. Security by Design principles split into Architectural Foundations (how the system is built) and Operational Integrity (how it is managed and maintained). Security by Default principles split into Default Hardening (products ship in a secure, restrictive state) and Guided Protection (users are supported in maintaining the baseline through clear warnings and recovery mechanisms).

This four-quadrant structure is elegant because it forces a separation of concerns that most teams blur. “We have MFA” is a Security by Design concern. “MFA is on by default and you have to actively disable it” is a Security by Default concern. The two are related but distinct, and conflating them is how you end up with products that support strong authentication but ship with it turned off, a pattern that still describes the majority of connected devices on the market.
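The distinction can be made concrete in a few lines. What follows is a minimal sketch, not anything taken from the playbook: `AuthSettings`, its fields, and `disable_mfa` are hypothetical names chosen for illustration. The point is only that the capability and its shipped state are two separate decisions, and that weakening the baseline takes a deliberate action.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class AuthSettings:
    """Hypothetical product settings; field names are illustrative only."""
    mfa_supported: bool = True  # Security by Design: the capability exists
    mfa_enabled: bool = True    # Security by Default: it ships switched on


def disable_mfa(settings: AuthSettings, confirmed_by_admin: bool) -> AuthSettings:
    """Return weakened settings only after an explicit confirmation."""
    if not confirmed_by_admin:
        raise PermissionError("disabling MFA requires explicit admin confirmation")
    return replace(settings, mfa_enabled=False)


# The factory state is the secure one; nothing has to be switched on.
factory_settings = AuthSettings()
```

A product that only satisfies the first field supports MFA; a product that satisfies both ships with it on and makes switching it off a conscious choice.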

The Architectural Foundations cluster contains the principles you would expect from any serious secure design framework: trust boundaries and threat modelling, least privilege, strong identity and authentication architecture, attack surface minimisation, defence in depth, and open design (the principle that security must not rely on obscurity). What makes the playbook version of these useful is not novelty but operationalization: each principle comes with a concrete objective, a list of practical engineering actions, a set of evidence items that demonstrate implementation, and criteria for a release gate review. This approach is not far from the philosophy behind the NIST Secure Software Development Framework (SSDF, SP 800-218), whose current version 1.1, published in February 2022, organizes similar expectations into four practice groups (Prepare, Protect, Produce, Respond).
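To show how the objective, evidence, and release gate elements could fit together in tooling, here is a rough sketch. The record layout, the `release_gate` function, and the evidence item names are my own assumptions for illustration; the playbook defines the concepts, not this structure.

```python
from dataclasses import dataclass, field


@dataclass
class PrincipleRecord:
    """Hypothetical per-principle record; not the playbook's own schema."""
    name: str
    objective: str
    required_evidence: set[str]
    collected_evidence: set[str] = field(default_factory=set)

    def missing_evidence(self) -> set[str]:
        return self.required_evidence - self.collected_evidence


def release_gate(records: list[PrincipleRecord]) -> tuple[bool, dict[str, list[str]]]:
    """The gate passes only when no principle has missing evidence."""
    gaps = {r.name: sorted(r.missing_evidence()) for r in records if r.missing_evidence()}
    return (not gaps, gaps)


# Illustrative data: evidence item names are invented for this example.
records = [
    PrincipleRecord(
        name="least privilege",
        objective="every component runs with the minimum rights it needs",
        required_evidence={"role matrix", "privilege review notes"},
        collected_evidence={"role matrix", "privilege review notes"},
    ),
    PrincipleRecord(
        name="threat modelling",
        objective="trust boundaries and abuse cases are documented and current",
        required_evidence={"data-flow diagram", "abuse-case list"},
        collected_evidence={"data-flow diagram"},
    ),
]

passed, gaps = release_gate(records)
```

With the sample data the gate fails, and `gaps` names the missing abuse-case list, which is the kind of actionable output a release review needs.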

The Operational Integrity cluster is where things get interesting for architects working on longer-lived systems. Secure coding practices, logging and monitoring, configuration and change management, incident response and recovery, vulnerability and patch management, and supply chain controls are all treated as first-class architectural concerns, not operational afterthoughts. The fact that “incident response and recovery” appears as a design principle, rather than just a process, reflects a genuinely mature view of what it means to build something resilient. It is consistent with what the CISA Secure by Design joint guidance, co-signed by 17 international partners, has been advocating since its first publication in 2023: moving responsibility for security outcomes back to the manufacturer, away from the end user.

Threat modelling the ENISA way

One of the strongest sections in the playbook covers threat modelling, and it deserves attention because it represents a deliberate departure from the “let’s run STRIDE and produce a 40-page document nobody reads” school of thought.

The playbook advocates for a minimum viable threat model: fast to produce, easy to refresh, and tightly coupled to delivery. The actual deliverable is not a comprehensive STRIDE decomposition but a data-flow diagram with trust boundaries annotated, a short list of five to ten prioritized abuse cases, and a controls-and-defaults mapping for each. The whole thing can fit in a wiki page. This approach extends what I described in the context of personal threat modelling, where the same asset-threat-risk structure applies, but now pushed into the engineering process itself rather than treated as a security team exercise.
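As a rough illustration of how small that deliverable can be, the three elements fit into a handful of data classes. This is a sketch under my own assumptions: the class and field names are hypothetical, and the sample lists three abuse cases rather than five to ten purely for brevity.

```python
from dataclasses import dataclass, field


@dataclass
class DataFlow:
    source: str
    destination: str
    crosses_trust_boundary: bool


@dataclass
class AbuseCase:
    title: str
    priority: int  # 1 = highest
    controls: list[str] = field(default_factory=list)
    secure_defaults: list[str] = field(default_factory=list)


@dataclass
class ThreatModel:
    flows: list[DataFlow]
    abuse_cases: list[AbuseCase]

    def boundary_crossings(self) -> list[DataFlow]:
        """Flows that cross a trust boundary and deserve the first look."""
        return [f for f in self.flows if f.crosses_trust_boundary]

    def unmitigated(self) -> list[str]:
        """Abuse cases with no control and no secure default mapped, by priority."""
        ordered = sorted(self.abuse_cases, key=lambda a: a.priority)
        return [a.title for a in ordered if not a.controls and not a.secure_defaults]


# Illustrative content for a hypothetical connected device.
model = ThreatModel(
    flows=[
        DataFlow("mobile app", "cloud API", crosses_trust_boundary=True),
        DataFlow("cloud API", "device", crosses_trust_boundary=True),
        DataFlow("cloud API", "internal queue", crosses_trust_boundary=False),
    ],
    abuse_cases=[
        AbuseCase("Captured pairing token is replayed", 1,
                  controls=["single-use, short-lived tokens"]),
        AbuseCase("Default admin password is guessed", 2,
                  secure_defaults=["unique per-device secret"]),
        AbuseCase("Firmware is downgraded to a vulnerable version", 3),
    ],
)
```

Calling `model.unmitigated()` surfaces the abuse cases that still lack a mapped control or default, which is the question the wiki page should answer at a glance.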

This is not simplification; it acknowledges something that seasoned architects already know: a threat model that nobody updates is worse than no threat model, because it creates false confidence. The playbook explicitly names the anti-patterns to avoid, including treating threat modelling as a one-off compliance exercise, over-engineering models that never influence actual design decisions, and failing to revisit the model after substantial changes. These are exactly the failure modes that make threat modelling a box-ticking activity in most organizations.

The playbook also specifies refresh triggers, events that require re-running the threat model: a new internet-exposed interface, a change in the authentication model, a new critical dependency, or a major architecture change. This framing treats threat modelling as a living activity embedded in the development process rather than a point-in-time artifact, which aligns naturally with what NIST calls “Respond to Vulnerabilities” in the SSDF, and with the event-driven review logic that NIS2 imposes on organizations through its periodic reassessment requirements.
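Refresh triggers lend themselves to a simple automated check, for example in a pull request template or a release checklist. The sketch below assumes that changes are already classified by a human or by tooling; the `Change` enum and `refresh_reasons` are hypothetical names, and the two non-trigger values are there only for contrast.

```python
from enum import Enum, auto


class Change(Enum):
    """Hypothetical change categories attached to a release or pull request."""
    NEW_INTERNET_EXPOSED_INTERFACE = auto()
    AUTH_MODEL_CHANGE = auto()
    NEW_CRITICAL_DEPENDENCY = auto()
    MAJOR_ARCHITECTURE_CHANGE = auto()
    DOCS_ONLY = auto()        # not a trigger, included for contrast
    UI_COPY_CHANGE = auto()   # not a trigger, included for contrast


# The four trigger events named in the text above.
REFRESH_TRIGGERS = frozenset({
    Change.NEW_INTERNET_EXPOSED_INTERFACE,
    Change.AUTH_MODEL_CHANGE,
    Change.NEW_CRITICAL_DEPENDENCY,
    Change.MAJOR_ARCHITECTURE_CHANGE,
})


def refresh_reasons(changes: list[Change]) -> list[str]:
    """Return the names of the changes that require a threat model refresh."""
    return sorted(c.name for c in set(changes) & REFRESH_TRIGGERS)
```

An empty result means the existing model still stands; anything else is a concrete reason to reopen it before release.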

For architects operating in agile or DevSecOps environments, the playbook’s recommendation to introduce “fast security gates aligned to existing agile ceremonies” is practical and implementable. This captures the original intent behind shift-left security: threat model scope review as part of sprint planning, lightweight abuse-case review as part of pull request checks, release gate validation as part of Definition of Done. None of this requires a dedicated security team sitting outside the development process.

The 22 playbooks: reading between the lines

The core of the document is the set of 22 individual playbooks in Section 4, each covering one principle. Reading through them as an architect, a few stand out as particularly well-executed or particularly revealing about where the industry’s gaps actually are.

Playbook 4.3 (Strong identity and auth architecture) goes beyond “implement MFA” to address the architectural question of how identities are created, verified, and managed across all interfaces: web portals, APIs, local management consoles, and device-to-cloud communication. This is the level at which identity problems actually live. As I explored in detail in the intersection of PAM and Zero Trust, most breaches do not exploit weak authentication: they exploit inconsistent authentication, where the main web interface has MFA but the API endpoint behind it does not, or the local management console has an entirely separate credential store with no monitoring. The playbook’s framing of identity as an architectural concern, not a configuration task, is the right one.
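The inconsistency problem is easy to express as a check over an interface inventory. This is a minimal sketch with hypothetical names and a deliberately simplified model: real products would describe API authentication in richer terms than a single MFA flag.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interface:
    """Hypothetical inventory entry for one way into the product."""
    name: str
    mfa_required: bool
    credential_store: str
    auth_events_logged: bool


def auth_inconsistencies(interfaces: list[Interface]) -> list[str]:
    """List gaps between interfaces rather than judging each in isolation."""
    findings = []
    stores = sorted({i.credential_store for i in interfaces})
    if len(stores) > 1:
        findings.append(f"{len(stores)} separate credential stores: {', '.join(stores)}")
    for i in interfaces:
        if not i.mfa_required:
            findings.append(f"{i.name}: MFA not enforced")
        if not i.auth_events_logged:
            findings.append(f"{i.name}: authentication events not logged")
    return findings


# Illustrative inventory mirroring the failure pattern described above.
interfaces = [
    Interface("web portal", True, "central-idp", True),
    Interface("REST API", False, "central-idp", True),
    Interface("local console", False, "local-passwd", False),
]

findings = auth_inconsistencies(interfaces)
```

The web portal alone would pass any review; the findings only appear once all interfaces are looked at together, which is the architectural view the playbook asks for.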

Playbook 4.6 (Open Design) demands careful reading precisely because it is often misunderstood. The principle is not that code must be open source; it is that security controls must remain effective even if an adversary fully understands how the system works. A system that relies on an attacker not knowing which port the admin interface is on is not using defence in depth; it is using obscurity. The parallel with LOLBins and the misuse of legitimate tools is apt: attackers regularly exploit components that are well-documented, well-understood, and present on every system by design. Defence that depends on attacker ignorance fails precisely when it matters most.

Playbook 4.14 (Supply chain controls) is brief but pointed. It addresses the verification of third-party components, including the use of SBOMs, supplier security assessments, and monitoring for newly disclosed vulnerabilities in dependencies. As I covered recently in a dedicated piece on SBOM and software supply chain maturity, the operational maturity question is not “do we have an SBOM?” but “do we know, right now, which deployed artifacts contain a component that appeared in yesterday’s CVE feed?” The playbook’s supply chain controls section sets the architectural preconditions for answering that question: defined processes for component ingestion, cryptographic signing of build artifacts, and continuous monitoring of the dependency graph. For architects building on top of open-source stacks or integrating commercial components, it pairs naturally with the defensive strategies for container image security I wrote about in February: supply chain trust is a property of the entire pipeline, not just the runtime.
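The "right now" question reduces to a join between per-artifact SBOM data and a feed of new advisories. The sketch below is deliberately naive: artifact names, component names, and advisory identifiers are all invented, and exact version matching stands in for the version-range and package-identifier handling that real SBOM tooling needs.

```python
# Hypothetical inventory: deployed artifact -> set of (component, version) pairs,
# as they might be extracted from each artifact's SBOM.
deployed_sboms = {
    "gateway-fw:2.3.1": {("libexample-tls", "3.0.7"), ("libexample-zip", "1.2.13")},
    "cloud-agent:1.9.0": {("libexample-tls", "3.1.2")},
    "mobile-sdk:4.0.2": {("libexample-zip", "1.2.13")},
}

# Hypothetical advisory feed entry; the identifier is a placeholder, not a real CVE.
new_advisories = [
    {
        "id": "EXAMPLE-ADV-0001",
        "component": "libexample-tls",
        "affected_versions": {"3.0.7", "3.0.8"},
    },
]


def affected_artifacts(sboms: dict, advisories: list) -> dict[str, list[str]]:
    """Map each deployed artifact to the advisories that hit one of its components."""
    hits: dict[str, list[str]] = {}
    for artifact, components in sboms.items():
        for adv in advisories:
            if any(
                name == adv["component"] and version in adv["affected_versions"]
                for name, version in components
            ):
                hits.setdefault(artifact, []).append(adv["id"])
    return hits


exposure = affected_artifacts(deployed_sboms, new_advisories)
```

The code is trivial; the hard part is having `deployed_sboms` accurate and current for everything in the field, which is exactly the precondition the supply chain controls are meant to establish.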

Playbooks 4.15 through 4.22 cover the Security by Default principles, and several of them are directly relevant to anyone designing IoT or enterprise appliances. The principle of “unique device identity and secrets by default” (4.18) addresses the chronic industry failure of shipping devices with shared default credentials, still one of the most exploited conditions in consumer and industrial IoT, as the recent case of IoT and AI in modern conflict scenarios has shown with uncomfortable clarity. “Mandatory security onboarding” (4.19) treats the out-of-the-box experience as a security event, not a user experience event. “Automated maintenance and updates” (4.20) argues that products should ship with a functioning update mechanism that requires deliberate user action to disable, rather than optional updates that require deliberate user action to enable. These three principles alone, fully implemented, would eliminate a significant fraction of the attack surface in typical connected products.
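A provisioning step that respects these three principles can be sketched briefly. Everything here is an assumption made for illustration: `DeviceProfile`, `provision`, and `complete_onboarding` are hypothetical names, and a real product would store a derived key or certificate rather than a raw secret.

```python
import secrets
from dataclasses import dataclass


@dataclass
class DeviceProfile:
    """Hypothetical factory profile for one device."""
    serial: str
    initial_secret: str
    onboarding_complete: bool = False  # device is not fully usable until onboarded
    auto_update: bool = True           # updates are on unless deliberately disabled


def provision(serial: str) -> DeviceProfile:
    """Generate a unique random secret per device instead of a shared default."""
    return DeviceProfile(serial=serial, initial_secret=secrets.token_urlsafe(24))


def complete_onboarding(device: DeviceProfile, new_secret: str) -> None:
    """Onboarding forces the owner to replace the factory secret."""
    if new_secret == device.initial_secret or len(new_secret) < 12:
        raise ValueError("choose a new secret of at least 12 characters")
    device.initial_secret = new_secret
    device.onboarding_complete = True


device_a = provision("SN-0001")
device_b = provision("SN-0002")
```

Two devices off the same line never share a credential, updates are on from the first boot, and the onboarding flag gives firmware something to check before exposing management functions.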

The machine-readable security manifest: a glimpse of what comes next

Section 5 of the playbook introduces a concept that deserves more attention than it is likely to get during the public consultation: the Machine-Readable Security Manifest (MRSM). The idea is a voluntary, manufacturer-issued artifact that provides a structured, machine-parseable record of a product’s security posture, including which security claims are made, what evidence supports those claims, and how the evidence was verified.

This is not a compliance report in a different format; it reflects a different philosophy. Instead of a PDF attestation that a human auditor reviews once during procurement, the MRSM is a structured data artifact that can be ingested by automated tooling, compared across vendors, and validated against policy programmatically. The playbook provides an illustrative example schema that expresses security claims as hierarchical entries with evidence attachments and verification results. In spirit, it extends the same logic as the SBOM toward the broader security posture of a product: machine-readable, continuously updatable, and queryable against current threat intelligence.

For architects, this signals where procurement and supply chain security are heading. The question “does this product implement least privilege?” today requires reading a vendor’s security whitepaper and trusting their self-attestation. With something like MRSM, it becomes a query against a structured artifact. The tooling to automate this does not fully exist yet, but the conceptual framework is here, and the CRA’s conformity assessment requirements may provide the incentive alignment needed to make it real.
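To make the "query against a structured artifact" idea tangible, here is a toy example. The JSON layout below is invented for this article and is not the playbook's illustrative schema; the field names, principle identifiers, and the `meets_policy` function are all assumptions.

```python
import json

# Hypothetical manifest; every field name and value here is illustrative.
MANIFEST = json.loads("""
{
  "product": "example-gateway",
  "version": "2.3.1",
  "claims": [
    {"principle": "least-privilege",
     "statement": "services run as unprivileged users",
     "evidence": [{"type": "test-report", "verified_by": "third-party"}]},
    {"principle": "secure-defaults",
     "statement": "remote management is off at first boot",
     "evidence": [{"type": "config-export", "verified_by": "self"}]},
    {"principle": "supply-chain-controls",
     "statement": "build artifacts are signed",
     "evidence": []}
  ]
}
""")


def meets_policy(manifest: dict, principle: str, accepted_verifiers: set[str]) -> bool:
    """True if the claim exists and has evidence verified by an accepted party."""
    for claim in manifest["claims"]:
        if claim["principle"] == principle:
            return any(e["verified_by"] in accepted_verifiers for e in claim["evidence"])
    return False  # no claim made for this principle


# A procurement policy that only accepts independently verified evidence.
policy = {"third-party"}
results = {
    p: meets_policy(MANIFEST, p, policy)
    for p in ("least-privilege", "secure-defaults", "supply-chain-controls")
}
```

Under this policy only the least-privilege claim passes: the other two are either self-attested or unsupported, which is the distinction a PDF whitepaper tends to hide.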

What actually changes for a security architect

Reading the playbook critically, the most valuable shift it represents is not in the content of individual principles (most of which are not new) but in the framing: secure by design is explicitly treated as an architectural responsibility, not a security team responsibility. This matters because the alternative, bolting security on after the fact, is demonstrably less effective and more expensive. As CISA’s own programme manager noted in 2025, implementing secure-by-design principles is akin to “locking the front door”: a necessary first step, but only a first step.

In practice, this means that design reviews need security gate criteria baked in from the start, not appended as a checklist at the end of the process. It means that the threat model is a living document owned by the team. It means that default configurations are a design decision with the same weight as a functional requirement, not a deployment-time detail. And it means that incident response and recovery are considered at design time: tabletop exercises and red team scenarios that test whether recovery procedures are actually feasible given the architecture, not just plausible on paper.

The playbook does not solve the hardest problem, which is organizational change; no document can. But it provides a vocabulary and a structure that make it easier to have the right conversations: with product managers about why security defaults cannot be compromised for time to market, with engineers about why the threat model needs updating when the authentication model changes, with procurement teams about why supply chain controls need to be part of vendor evaluation criteria. The CERT-EU CTI Framework published in February 2026 provides a complementary perspective from the threat intelligence side, helping translate threat knowledge into the kind of adversary assumptions that make a threat model useful rather than generic.

Architects who internalize the playbook’s framework now will be better positioned to build systems that are defensible both technically and legally when the compliance deadlines land. The document is a draft, still under public consultation, and it will evolve. The direction it sets is worth following now.

The ENISA Secure by Design and Default Playbook (v0.4 draft for public consultation) is available on the ENISA website under CC-BY 4.0. The NIST SSDF SP 800-218 and the CISA Secure by Design joint guidance are referenced companion documents.