Light Commands: hacking voice assistants via laser beam

Researchers from the University of Michigan and the University of Electro-Communications in Tokyo have demonstrated that it is possible to hack smart voice assistants such as Siri, Alexa and Google Assistant by using a laser beam to send them inaudible commands.

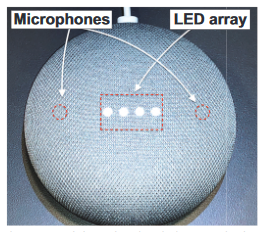

This new technique, dubbed Light Commands, exploits a design flaw in the smart assistants' MEMS microphones.

MEMS (micro-electro-mechanical systems) microphones convert sound into electrical signals, but the researchers demonstrated that they also react to laser light aimed directly at them:

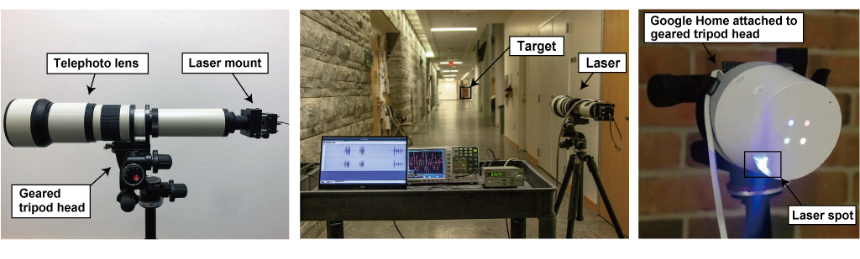
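To make the mechanism more concrete: the researchers' paper describes encoding the audio signal in the intensity of the light beam, which the microphone then picks up as if it were sound. The snippet below is only a minimal sketch of that amplitude-modulation idea, not the researchers' tooling. The function name `modulate_laser_power`, the bias and depth values, and the test tone are all hypothetical and chosen purely for illustration.

```python
import math


def modulate_laser_power(audio, bias_mw, depth_mw):
    """Map audio samples in [-1, 1] to an instantaneous laser power in mW.

    Hypothetical helper: optical power cannot be negative, so the audio
    waveform rides on top of a constant bias level.
    """
    if depth_mw < 0 or depth_mw > bias_mw:
        raise ValueError("depth_mw must be between 0 and bias_mw")
    powers = []
    for sample in audio:
        clipped = max(-1.0, min(1.0, sample))  # keep samples in range
        powers.append(bias_mw + depth_mw * clipped)
    return powers


# Illustrative 1 kHz tone sampled at 16 kHz (one full period, 16 samples).
SAMPLE_RATE = 16_000
tone = [math.sin(2 * math.pi * 1_000 * n / SAMPLE_RATE) for n in range(16)]

# Assumed values: 5 mW bias with a 2 mW modulation depth.
power = modulate_laser_power(tone, bias_mw=5.0, depth_mw=2.0)
```

With these assumed values the laser power swings between roughly 3 mW and 7 mW, following the shape of the audio waveform.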

By shining a laser through a window at the microphones inside smart speakers, tablets, or phones, a distant attacker can remotely send inaudible and potentially invisible commands, which are then acted upon by Alexa, Portal, Google Assistant or Siri.

Worse, once an attacker has gained control over a voice assistant, they can use it to compromise other systems.

The research team

- Takeshi Sugawara, The University of Electro-Communications

- Benjamin Cyr, University of Michigan

- Sara Rampazzi, University of Michigan

- Daniel Genkin, University of Michigan

- Kevin Fu, University of Michigan

Which devices are vulnerable to Light Commands?

According to the technical paper, the attack has been successfully tested on the most popular voice recognition systems, such as Amazon Alexa, Apple Siri, Facebook Portal and Google Assistant.

As the researchers explain: "Light can easily travel long distances, limiting the attacker only in the ability to focus and aim the laser beam. We have demonstrated the attack in a 110 meter hallway, which is the longest hallway available to us at the time of writing."

The table below lists the tested devices, together with the minimum laser power needed and the maximum distances reached during the tests:

| Device | Voice Recognition System | Minimum Laser Power at 30 cm [mW] | Max Distance at 60 mW [m]* | Max Distance at 5 mW [m]** |
| --- | --- | --- | --- | --- |
| Google Home | Google Assistant | 0.5 | 50+ | 110+ |
| Google Home mini | Google Assistant | 16 | 20 | - |
| Google NEST Cam IQ | Google Assistant | 9 | 50+ | - |
| Echo Plus 1st Generation | Amazon Alexa | 2.4 | 50+ | 110+ |
| Echo Plus 2nd Generation | Amazon Alexa | 2.9 | 50+ | 50 |
| Echo | Amazon Alexa | 25 | 50+ | - |
| Echo Dot 2nd Generation | Amazon Alexa | 7 | 50+ | - |
| Echo Dot 3rd Generation | Amazon Alexa | 9 | 50+ | - |
| Echo Show 5 | Amazon Alexa | 17 | 50+ | - |
| Echo Spot | Amazon Alexa | 29 | 50+ | - |
| Facebook Portal Mini | Alexa + Portal | 18 | 5 | - |
| Fire Cube TV | Amazon Alexa | 13 | 20 | - |
| EcoBee 4 | Amazon Alexa | 1.7 | 50+ | 70 |
| iPhone XR | Siri | 21 | 10 | - |
| iPad 6th Gen | Siri | 27 | 20 | - |
| Samsung Galaxy S9 | Google Assistant | 60 | 5 | - |
| Google Pixel 2 | Google Assistant | 46 | 5 | - |

Is there a mitigation?

Countermeasures include additional authentication, sensor fusion techniques, or a cover on top of the microphone to keep light from hitting it:

An additional layer of authentication can mitigate the attack to some extent. Alternatively, if the attacker cannot eavesdrop on the device's response, having the device ask the user a simple randomized question before executing a command can be an effective way of preventing the attacker from getting commands executed.
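The randomized-question idea can be sketched in a few lines. This is an illustrative example only, not how any vendor implements it. The names `make_challenge` and `authorize`, the arithmetic question format, and the `ask` callback (assumed to speak the question aloud and return the transcribed reply) are all hypothetical.

```python
import secrets


def make_challenge():
    """Return a random (question, expected_answer) pair."""
    a = secrets.randbelow(9) + 1  # random integer from 1 to 9
    b = secrets.randbelow(9) + 1
    return f"What is {a} plus {b}?", str(a + b)


def authorize(ask):
    """Return True only if the reply to a fresh random question is correct.

    `ask` is a hypothetical callback: it receives the question text and
    returns the user's reply as a string.
    """
    question, expected = make_challenge()
    reply = ask(question)
    return reply.strip() == expected
```

An attacker who injects commands by laser but cannot hear the device's question has to guess the answer, so a fixed pre-recorded reply fails most of the time. A real deployment would need a larger answer space than this toy example offers.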

Manufacturers can also use sensor fusion techniques, such as acquiring audio from multiple microphones. When the attacker uses a single laser, only one microphone receives a signal while the others receive nothing. Manufacturers can therefore detect such anomalies and ignore the injected commands.
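As a rough illustration of such an anomaly check, the sketch below compares the signal level across microphone channels and flags input where one channel is far louder than every other. It is a simplified assumption of how this could work, not a description of any shipping product. The function names, the RMS-based comparison, and the threshold values are hypothetical.

```python
import math


def rms(samples):
    """Root-mean-square level of one channel."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def looks_like_single_mic_injection(channels, ratio_threshold=10.0, floor=1e-6):
    """Flag input where one microphone is far louder than all the others.

    `channels` is a list of equal-length sample lists, one per microphone.
    `ratio_threshold` and `floor` are illustrative values, not tuned ones.
    """
    if len(channels) < 2:
        raise ValueError("need at least two microphone channels")
    levels = sorted((rms(ch) for ch in channels), reverse=True)
    loudest, second = levels[0], levels[1]
    if loudest < floor:
        return False  # everything is silent, nothing to flag
    # Genuine speech reaches every microphone at a comparable level;
    # a single laser spot drives only one of them.
    return loudest > ratio_threshold * max(second, floor)
```

Real sound reaches all microphones with similar energy, so the ratio between the loudest and the second-loudest channel stays small. A practical detector would also have to cope with noise and with microphones that are partly blocked.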

Another approach is to reduce the amount of light reaching the microphone's diaphragm, either with a barrier that physically blocks straight light beams and eliminates the line of sight to the diaphragm, or with a non-transparent cover over the microphone hole that attenuates the light hitting the microphone. However, the researchers note that such physical barriers are only effective up to a point, because an attacker can always increase the laser power to compensate for the cover-induced attenuation or to burn through the barrier, creating a new light path.
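A back-of-the-envelope calculation shows why attenuation alone only raises the bar. The sketch below assumes a deliberately simple model in which the cover passes a fixed fraction of the light, so the attacker needs the original minimum power divided by that fraction. The function name and the 10% transmittance figure are hypothetical; the 0.5 mW baseline is the Google Home value from the table above.

```python
def required_power_mw(min_power_mw, transmittance):
    """Laser power needed behind a cover, under a simple linear model.

    `transmittance` is the assumed fraction of light the cover lets
    through (0 < transmittance <= 1). Real covers also scatter light,
    which this sketch ignores.
    """
    if not 0.0 < transmittance <= 1.0:
        raise ValueError("transmittance must be in (0, 1]")
    return min_power_mw / transmittance


# Google Home needs 0.5 mW at 30 cm without a cover (see the table).
# A hypothetical cover that passes only 10% of the light:
needed = required_power_mw(0.5, 0.1)
```

Under these assumptions the attacker needs about 5 mW instead of 0.5 mW, which is still well below the 60 mW laser used in the distance tests.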