Face ID vs. Android Face Unlock: A Security Comparison

The hardware gap that defines the comparison

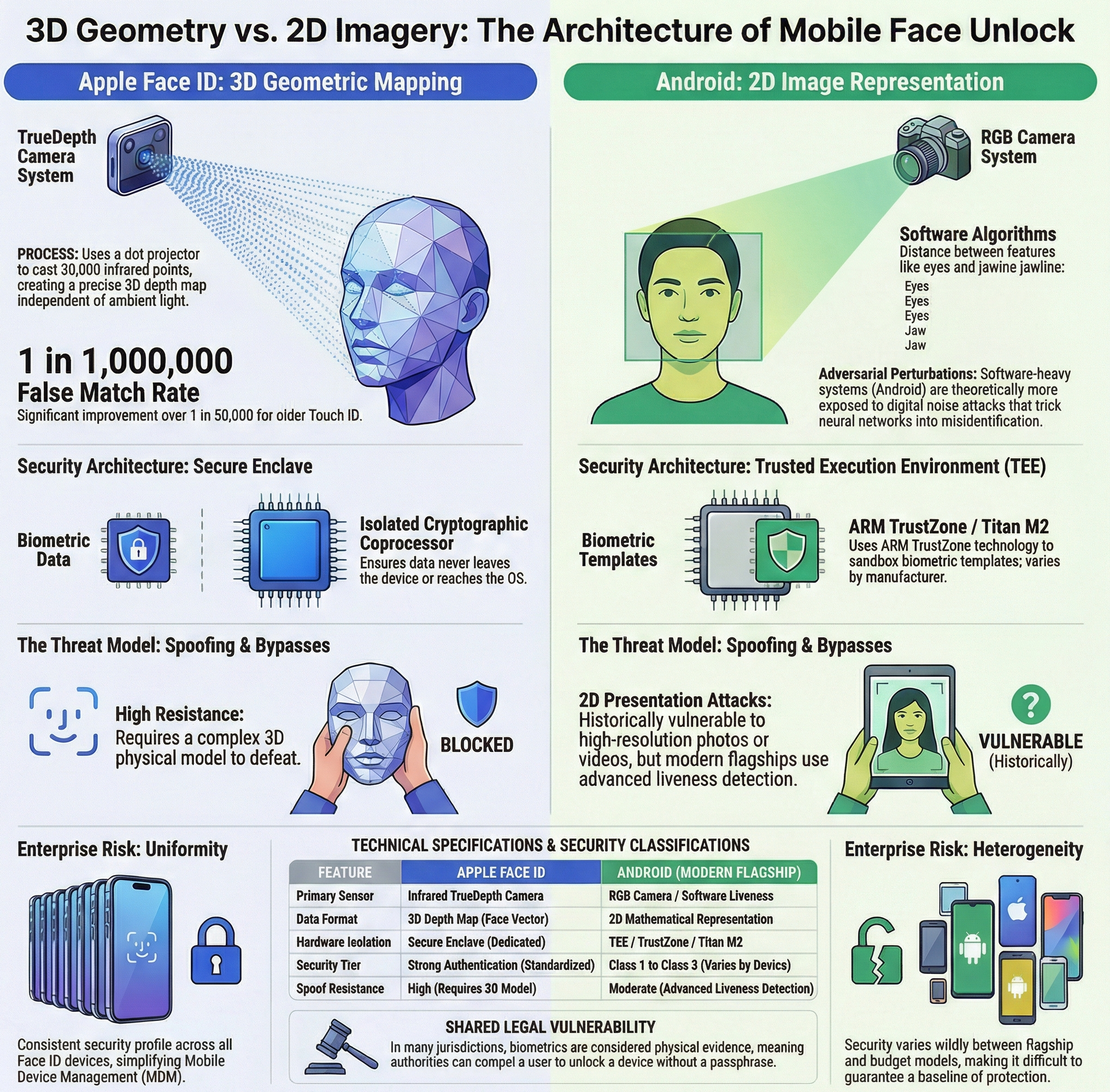

Apple built Face ID around dedicated hardware that most competitors have never replicated at scale. The TrueDepth camera system, introduced with the iPhone X in 2017 and carried forward in later Face ID devices, combines a dot projector, a flood illuminator, and an infrared camera. The dot projector casts more than 30,000 invisible infrared points onto the user’s face, and the infrared camera reads the distortion of those dots to generate a depth map; the flood illuminator lets the same camera capture a separate infrared image of the face, even in darkness. This is not image recognition in the conventional sense; it is a geometric measurement of the physical structure of a human face, performed in three dimensions and largely independent of visible ambient light.
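To make the idea of reading depth from dot distortion concrete, here is a minimal sketch of textbook structured-light triangulation. Apple does not publish its depth algorithm, so this is the generic relation (depth equals focal length times baseline divided by disparity) rather than Face ID’s actual method, and every number below is a hypothetical value chosen for illustration.

```python
def depth_from_disparity(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Triangulated distance in metres for a single projected dot."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def relief(depths: list[float]) -> float:
    """Spread between the nearest and farthest sampled points, in metres."""
    return max(depths) - min(depths)


# Hypothetical optics: 600 px focal length, 2.5 cm projector-to-camera baseline.
FOCAL_PX = 600.0
BASELINE_M = 0.025

# Hypothetical dot shifts in pixels. Closer surfaces (a nose tip) shift more.
face_disparities = [50.0, 52.0, 55.0, 53.0, 50.5]
# A flat print held at one distance shifts every dot by the same amount.
photo_disparities = [50.0, 50.0, 50.0, 50.0, 50.0]

face_depths = [depth_from_disparity(FOCAL_PX, BASELINE_M, d) for d in face_disparities]
photo_depths = [depth_from_disparity(FOCAL_PX, BASELINE_M, d) for d in photo_disparities]

print(f"face relief:  {relief(face_depths) * 100:.1f} cm")
print(f"photo relief: {relief(photo_depths) * 100:.1f} cm")
```

The real face shows a few centimetres of relief while the flat print shows none, which is the structural information a 2D camera never sees.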

Android manufacturers have historically taken a different path. The vast majority of Android phones, including flagship models from Samsung, OnePlus, and many others, perform facial recognition using the front-facing RGB camera. This 2D approach converts a standard photograph into a mathematical representation of facial geometry, measuring relative distances between the eyes, nose, mouth, and jawline. The process is fast and works reliably in daylight, but it is fundamentally different in its security profile. A photograph, a detailed mask, or even a video played on a second screen can, under certain conditions, defeat a purely 2D recognition system. This asymmetry in hardware capability is the root of almost every meaningful security difference between the two ecosystems.

How Face ID maps geometry into identity

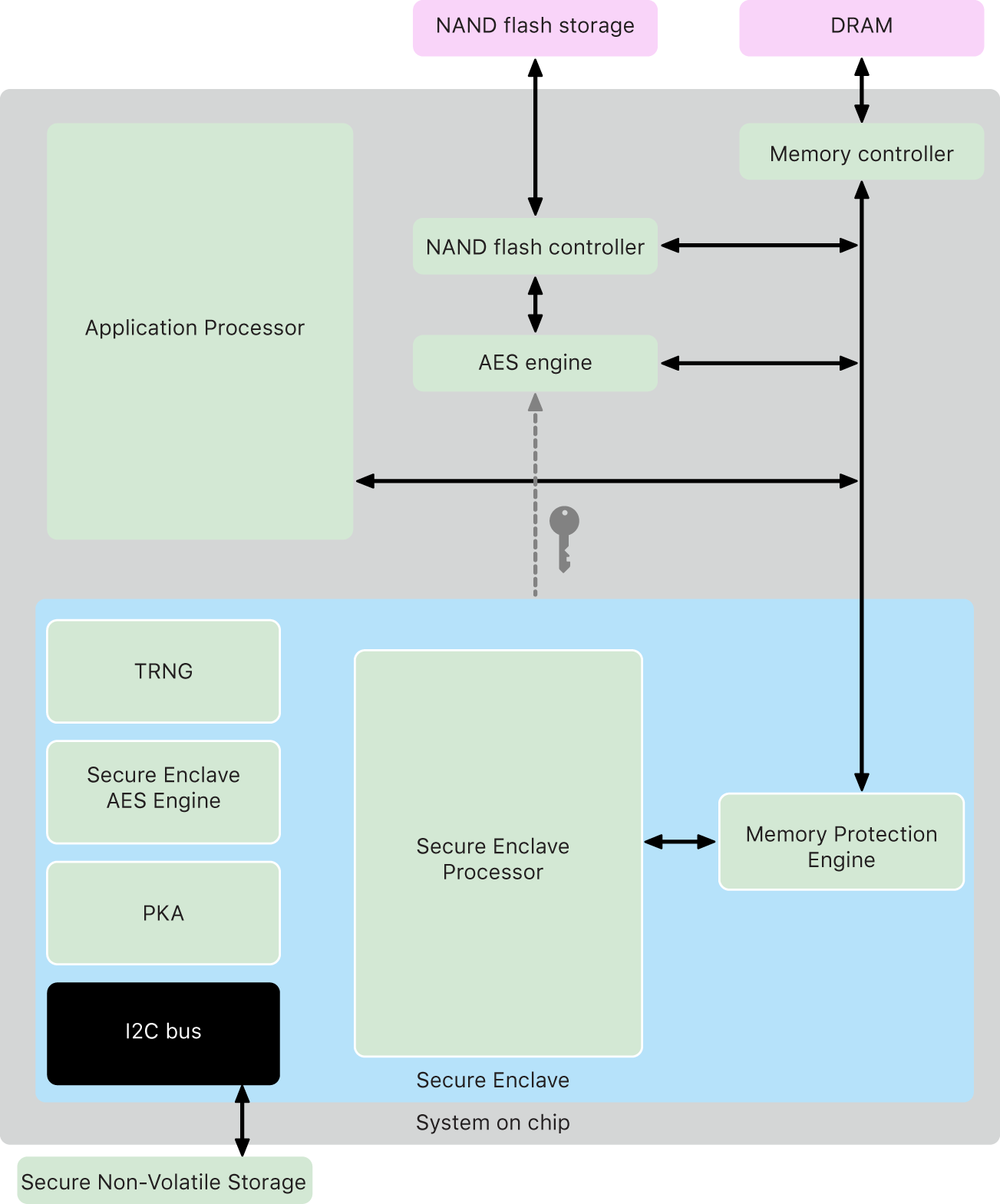

Diagram of the Secure Enclave components. Source: Apple Platform Security.

The depth map generated by Apple’s TrueDepth hardware is converted into a mathematical model, informally referred to as a face vector: a compact numerical representation of the three-dimensional structure of the face. This vector captures structural information that no flat image can contain. A neural network running entirely on the device compares each unlock attempt against the stored vector, producing a confidence score; if that score exceeds a defined threshold, access is granted. Critically, neither the captured face data nor the resulting vector ever leaves the device. According to Apple’s platform security documentation, the captured images are discarded once the representation has been computed, and the representation itself is encrypted and available only to the Secure Enclave, a dedicated cryptographic coprocessor isolated from the main application processor and inaccessible even to the operating system itself.
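The compare-and-threshold step can be sketched in a few lines. Apple does not disclose the similarity measure or the threshold it uses, so cosine similarity, the four-element vectors, and the 0.95 cutoff below are all assumptions made purely for illustration.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("vectors must be non-zero")
    return dot / (norm_a * norm_b)


def is_match(enrolled: list[float], probe: list[float], threshold: float = 0.95) -> bool:
    """Grant access only when the similarity score clears the threshold."""
    return cosine_similarity(enrolled, probe) >= threshold


# Hypothetical face vectors; real templates have far more dimensions.
enrolled = [0.12, 0.80, 0.35, 0.45]
same_person = [0.10, 0.82, 0.33, 0.47]   # small drift between captures
stranger = [0.90, 0.10, 0.40, 0.05]

print(is_match(enrolled, same_person))
print(is_match(enrolled, stranger))
```

Raising the threshold lowers the chance of a false match at the cost of more rejected legitimate attempts; that trade-off is what the published false-match figures summarize.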

The Secure Enclave is not merely a software boundary. It is a dedicated subsystem within the system-on-chip (SoC) with its own boot process, its own encrypted memory, and communication channels that the main application processor cannot read. Even if the application layer were fully compromised by malware or a privilege escalation exploit, the biometric data held by the enclave would remain unreachable. Apple puts the probability of a random individual unlocking someone else’s device with Face ID at less than one in one million, compared to one in fifty thousand for the older Touch ID fingerprint sensor. The system also adapts over time, gradually updating the stored vector to account for natural changes in appearance such as facial hair, eyeglasses, or the slower drift of aging. This continuous learning is performed without transmitting any data externally, maintaining the privacy model alongside the security one.
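The two published figures are easier to compare with a little arithmetic. The sketch below treats each attempt as an independent trial, which is a simplifying assumption, and uses an attempt budget of five, the number of failed biometric attempts Apple documents before a passcode is required. The Face ID rate is taken at its upper bound of one in one million.

```python
def p_any_false_match(p_single: float, attempts: int) -> float:
    """Probability that at least one of `attempts` independent tries falsely matches."""
    return 1.0 - (1.0 - p_single) ** attempts


FACE_ID_RATE = 1 / 1_000_000   # upper bound stated by Apple
TOUCH_ID_RATE = 1 / 50_000     # figure stated by Apple
ATTEMPT_BUDGET = 5             # assumed lockout limit for this sketch

face_risk = p_any_false_match(FACE_ID_RATE, ATTEMPT_BUDGET)
touch_risk = p_any_false_match(TOUCH_ID_RATE, ATTEMPT_BUDGET)

print(f"Face ID, {ATTEMPT_BUDGET} attempts:  {face_risk:.2e}")
print(f"Touch ID, {ATTEMPT_BUDGET} attempts: {touch_risk:.2e}")
print(f"single-attempt ratio: {TOUCH_ID_RATE / FACE_ID_RATE:.0f}x")
```

Under these assumptions a stranger’s face is roughly twenty times less likely to be accepted than a stranger’s fingerprint, and the attempt limit keeps the cumulative risk in the same range as the single-attempt figure.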

Android’s approach: algorithms over dedicated optics

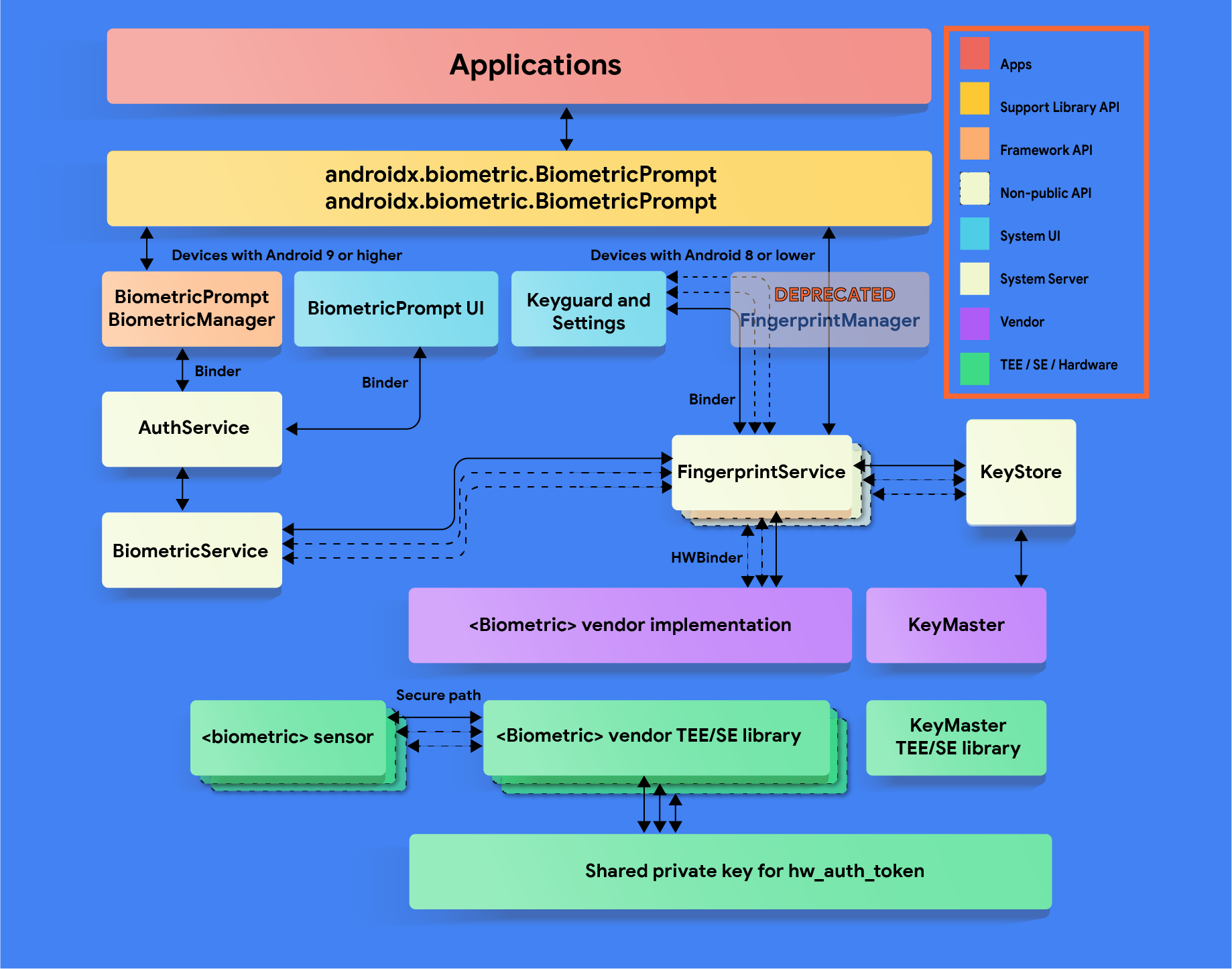

BiometricPrompt architecture and the Trusted Execution Environment stack. Source: Android Open Source Project.

Google has pursued a different strategy on its recent Pixel line, and the evolution of that strategy illustrates how far software can extend the limits of biometric security without specialized hardware. After Google abandoned the dedicated 3D sensors of the Pixel 4, the 2D face unlock implementations that followed on Android were widely classified as a weaker biometric modality, acceptable for device unlock but not for authorizing financial transactions or accessing sensitive application data. The Android biometric security framework defines three security classes, from Class 1 (convenience only) through Class 2 (weak) to Class 3 (strong authentication), and for years face unlock sat below the threshold required for payment authorization or cryptographic key access.
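The class system works as a gate: each operation demands a minimum class, and a sensor below that class is refused. The following sketch models that rule in plain Python. It is not the Android API; the operation names and the mapping are hypothetical, chosen to mirror the distinctions described above.

```python
from enum import IntEnum


class BiometricClass(IntEnum):
    """Android's three biometric classes, ordered by strength."""
    CONVENIENCE = 1
    WEAK = 2
    STRONG = 3


# Hypothetical operation names with the minimum class each one requires.
REQUIRED_CLASS = {
    "device_unlock": BiometricClass.CONVENIENCE,
    "payment_authorization": BiometricClass.STRONG,
    "keystore_key_access": BiometricClass.STRONG,
}


def is_permitted(sensor_class: BiometricClass, operation: str) -> bool:
    """True when the sensor's class meets the operation's minimum."""
    return sensor_class >= REQUIRED_CLASS[operation]


legacy_face_unlock = BiometricClass.WEAK
print(is_permitted(legacy_face_unlock, "device_unlock"))
print(is_permitted(legacy_face_unlock, "payment_authorization"))
print(is_permitted(BiometricClass.STRONG, "keystore_key_access"))
```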

With the Pixel 8, Google upgraded face unlock to a Class 3 biometric, meaning it meets the same security bar as fingerprint authentication and can be used with the Android Keystore to protect cryptographic keys. The improvement came not from new sensors but from substantial advances in liveness detection, the algorithmic ability to distinguish a live human face from a static image or a three-dimensional replica. Modern liveness detection systems analyze micro-movements, skin texture variations, infrared reflectance patterns on devices equipped with appropriate illuminators, and temporal consistency across multiple frames captured during the brief unlock gesture. The result is a system that remains theoretically more vulnerable than Apple’s hardware-centric approach but is considerably harder to fool than earlier software-only implementations.
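One of the cues listed above, movement across frames, can be illustrated with a deliberately crude check. This is a toy inter-frame difference test, not Google’s liveness model, and the pixel values and the 0.5 threshold are hypothetical. It catches a perfectly static photograph but would not stop a replayed video, which is why production systems combine several signals.

```python
def mean_abs_diff(frame_a: list[int], frame_b: list[int]) -> float:
    """Average absolute per-pixel change between two equal-size frames."""
    if len(frame_a) != len(frame_b):
        raise ValueError("frames must have the same size")
    return sum(abs(a - b) for a, b in zip(frame_a, frame_b)) / len(frame_a)


def shows_motion(frames: list[list[int]], min_motion: float = 0.5) -> bool:
    """True if the average frame-to-frame change exceeds min_motion."""
    if len(frames) < 2:
        raise ValueError("need at least two frames")
    diffs = [mean_abs_diff(prev, cur) for prev, cur in zip(frames, frames[1:])]
    return sum(diffs) / len(diffs) > min_motion


# Hypothetical 4-pixel grayscale frames captured during one unlock gesture.
static_photo = [[120, 130, 125, 118] for _ in range(4)]
live_face = [
    [120, 130, 125, 118],
    [121, 128, 127, 117],
    [119, 131, 124, 120],
    [122, 129, 126, 118],
]

print(shows_motion(static_photo))
print(shows_motion(live_face))
```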

Android also isolates biometric data, but through a different architecture. Biometric templates reside within the Trusted Execution Environment (TEE), implemented through ARM TrustZone technology, while cryptographic keys can be safeguarded by a dedicated secure element such as the Titan M2 chip on Google Pixel devices. The TEE provides strong isolation from the main OS, comparable in concept to Apple’s Secure Enclave, though how far that comparison holds depends on the specific implementation. The key challenge for Android is not any single device but the ecosystem as a whole: across hundreds of manufacturers and thousands of models, the quality of TEE implementation varies in ways that are difficult for end users or even administrators to evaluate without detailed technical documentation.

The threat model: spoofing, bypasses, and real-world attacks

The most operationally relevant question is not which system is theoretically stronger but which is more difficult to defeat under realistic adversarial conditions. Presentation attacks (using a physical or digital replica of the target’s face to deceive the sensor) represent the primary concern for any face-based biometric.

A 2D face unlock system is inherently vulnerable to attacks using high-resolution photographs, printed or displayed on a screen. Several older Android devices were publicly demonstrated to unlock using nothing more than an image retrieved from a social media profile, or even a video playing on another smartphone.

A popular demonstration by Unbox Therapy showing how the Samsung Galaxy S10’s 2D face unlock could be bypassed using a video.

Apple’s Face ID sets a substantially higher bar: defeating it requires a custom-crafted three-dimensional model of the target’s face with accurate depth representation and credible infrared reflectance properties, a task that is neither trivial nor inexpensive. However, researchers, such as those at the Vietnamese security firm Bkav, have successfully demonstrated proof-of-concept bypasses using highly detailed 3D-printed masks combined with 2D infrared images for the eyes.

Security researchers from Bkav demonstrating a proof-of-concept bypass of Apple’s Face ID using a specially crafted 3D mask. (Video via Reuters)

Law enforcement interaction represents a separate and often underappreciated dimension of the threat model. In documented cases across multiple jurisdictions, authorities have compelled individuals to unlock devices using biometric authentication, on the grounds that biometrics constitute physical evidence rather than testimonial disclosure protected against self-incrimination. The legal framework around this coercion varies by country, but the practical implication is that both Face ID and Android face unlock can be used to access a device without requiring the owner to disclose a passphrase. From this angle, the security difference between the two systems becomes less decisive than the shared vulnerability intrinsic to any biometric access control mechanism: the authenticator is always present and visible.

A subtler attack surface involves the neural network inference layer itself. Adversarial perturbations, carefully crafted and nearly imperceptible modifications to input data, can sometimes cause classification models to produce incorrect outputs. Since Android face unlock relies more heavily on learned feature representations, it is theoretically more exposed to this category of attack. In practice, such techniques require significant expertise and physical proximity to the target device. Apple’s reliance on raw depth measurements, rather than purely learned visual features, offers a degree of inherent resistance to adversarial input manipulation, since the depth sensor produces structured physical data that is harder to perturb than a pixel array.
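A toy example shows why learned feature scores are sensitive to small, targeted changes. The sketch below applies a perturbation in the style of the fast gradient sign method to a linear scorer, where the gradient of the score with respect to the input is simply the weight vector. The weights, features, and threshold are hypothetical, and a real face model is far larger and nonlinear.

```python
def score(weights: list[float], features: list[float]) -> float:
    """Linear match score: the dot product of weights and features."""
    return sum(w * x for w, x in zip(weights, features))


def sign(value: float) -> int:
    """Return -1, 0, or 1 according to the sign of value."""
    return (value > 0) - (value < 0)


def perturb(weights: list[float], features: list[float], epsilon: float) -> list[float]:
    """Shift each feature by at most epsilon in the direction that raises the score."""
    return [x + epsilon * sign(w) for w, x in zip(weights, features)]


# Hypothetical model and input; the input is rejected because its score is below zero.
weights = [0.8, -0.5, 0.3, -0.9]
features = [0.2, 0.4, 0.1, 0.3]
THRESHOLD = 0.0

adversarial = perturb(weights, features, epsilon=0.15)

print(f"original score:    {score(weights, features):+.3f}")
print(f"adversarial score: {score(weights, adversarial):+.3f}")
```

Each feature moves by only 0.15, yet the score rises by 0.15 times the sum of the absolute weights, enough here to cross the threshold. Input that comes from a physical depth measurement is harder to nudge in this coordinated way than pixel values are.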

What this means in practice for users and organizations

The security gap between the two systems is real, measurable, and architecturally significant. It does not, however, translate uniformly into elevated risk for every user in every context. For the vast majority of individuals using face unlock to avoid typing a PIN throughout the day, Face ID and current Class 3 Android implementations both provide adequate protection against casual access by strangers, opportunistic theft, or unsophisticated attackers. The adversary capable of defeating either of them at scale remains confined, for now, to well-resourced state actors and highly specialized security researchers. Older 2D implementations, as the photo and video bypasses show, do not share that margin.

For organizations evaluating mobile device management policies or assessing risk for regulated industries, the distinction carries considerably more weight. Apple’s uniform hardware implementation across all Face ID devices means that the security properties of the biometric are predictable and consistent across an entire fleet. An IT administrator deploying iPhones in a financial services or healthcare environment can rely on the same depth-sensing architecture regardless of which iPhone model employees carry. Android’s heterogeneous ecosystem makes equivalent assurance difficult to provide. A Samsung Galaxy S25 and a budget device from a lesser-known manufacturer may both advertise face unlock, but the underlying implementation, from sensor quality to TEE integrity to software patch level, can differ dramatically.
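For an administrator, that variance turns into an inventory question: which devices in the fleet actually meet the bar? Here is a minimal sketch of such a check, assuming a policy that requires a Class 3 biometric and a security patch no older than 90 days. The device names, the thresholds, and the `Device` record are all hypothetical; a real deployment would pull these attributes from its device management tooling.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Device:
    model: str
    biometric_class: int   # 1, 2, or 3
    last_patch: date       # date of the installed security patch


def meets_policy(device: Device, today: date,
                 min_class: int = 3, max_patch_age_days: int = 90) -> bool:
    """True when the device has a strong enough biometric and a recent patch."""
    patch_age = (today - device.last_patch).days
    return device.biometric_class >= min_class and patch_age <= max_patch_age_days


# Hypothetical fleet snapshot.
fleet = [
    Device("flagship-a", 3, date(2025, 5, 1)),
    Device("budget-b", 1, date(2025, 5, 1)),     # recent patch, weak biometric
    Device("flagship-c", 3, date(2024, 6, 1)),   # strong biometric, stale patch
]
today = date(2025, 6, 1)

compliant = [d.model for d in fleet if meets_policy(d, today)]
print(compliant)
```

Only the first device passes, because both conditions have to hold at once; advertising face unlock is not enough.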

The principle of least privilege suggests that sensitive operations, whether authorizing a wire transfer or accessing an encrypted credential vault, should require the strongest available authentication factor. On Apple hardware, Face ID satisfies this requirement natively and consistently. On Android, the answer depends on the specific device, the Android version, and the application’s own implementation of the BiometricPrompt API. Recent flagships from Google and Samsung running fully patched software come close to closing the gap in practical terms. Older or lower-tier devices running outdated Android versions do not. Any organizational security policy that treats Android face unlock as equivalent to Face ID without accounting for this variance is operating on an assumption that the hardware and software stack does not always support.

The face, whether read in three dimensions by a dedicated sensor or interpreted by a neural network trained on millions of images, remains among the most convenient biometric factors available on a consumer device. The technology behind it is not monolithic, and treating it as such is a security mistake. Understanding that projecting 30,000 infrared points onto a face and recognizing its photograph with a standard camera are architecturally different operations, with different attack surfaces and different failure modes, is the foundation of any informed decision about mobile authentication, whether you are choosing a phone for personal use or writing a biometric policy for an enterprise.