Ten problems every Volatility2 analyst will hit when migrating to Volatility3

After years of daily use in incident response and forensic investigations, Volatility2 becomes part of muscle memory. Commands are typed by reflex, plugin behaviour is predictable, and the toolchain rarely surprises you. Moving to Volatility3 dismantles most of those assumptions at once. The rewrite is architecturally justified and the result is genuinely superior, but the migration path is littered with specific, repeatable problems that every experienced analyst hits in roughly the same order. These are the ten that caused the most friction, with the solutions that actually resolved them.

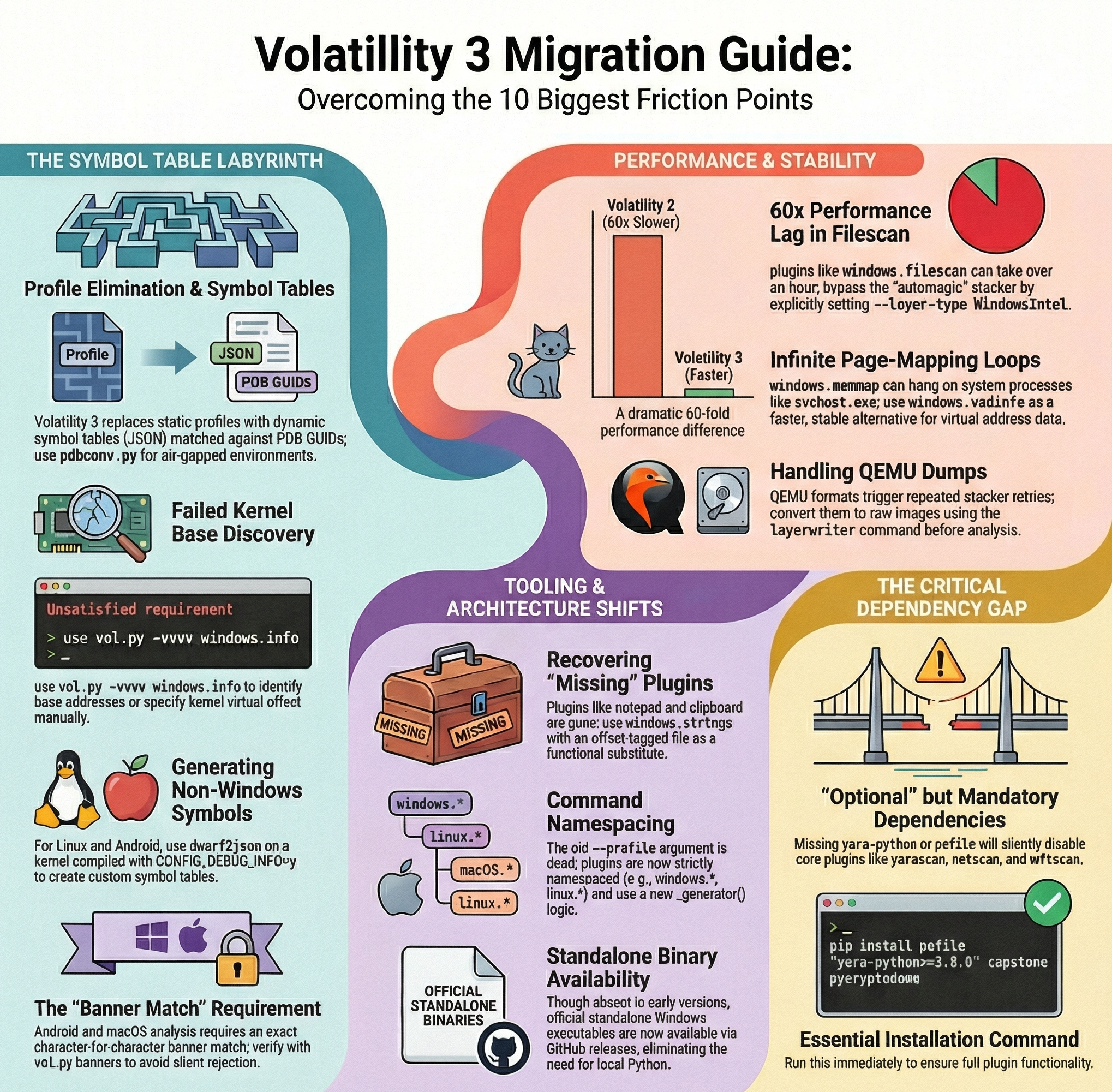

The symbol table labyrinth: Windows edition

The first and most disorienting problem is the complete elimination of profiles. In Volatility2, a profile tied the tool to a specific OS version and analysis proceeded with a single --profile=Win10x64_18362 argument. Volatility3 replaces this model with symbol tables: compressed JSON files that are resolved dynamically and matched against the PDB GUID embedded in the memory image. In connected environments the framework contacts Microsoft’s symbol server automatically, downloads the matching PDB, converts it, and caches the result locally. The first run on a new kernel version is slow, but subsequent runs reuse the cached table and start almost immediately. For air-gapped environments, the JPCERT/CC offline usage guide documents how to pre-populate the cache on a connected machine using pdbconv.py and transfer the resulting files to the isolated workstation.
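
Before moving an image to an isolated workstation, it can help to check whether the matching symbol table is already in the local cache. The sketch below assumes the cache follows a windows/<pdb name>/<GUID>-<AGE>.json.xz layout under the symbols directory; that layout, the default directory name, and the GUID in the example are assumptions to verify against your own installation.

```python
from pathlib import Path


def expected_isf_path(symbols_root, pdb_name, guid, age):
    """Build the path where a converted symbol table is assumed to be cached."""
    # Assumed layout: <symbols_root>/windows/<pdb_name>/<GUID>-<AGE>.json.xz
    filename = f"{guid.upper()}-{age}.json.xz"
    return Path(symbols_root) / "windows" / pdb_name / filename


def is_cached(symbols_root, pdb_name, guid, age):
    """Return True when the assumed cache file exists on disk."""
    return expected_isf_path(symbols_root, pdb_name, guid, age).is_file()


if __name__ == "__main__":
    # Hypothetical GUID and age, for illustration only.
    path = expected_isf_path(
        "volatility3/symbols", "ntkrnlmp.pdb",
        "0123456789abcdef0123456789abcdef", 1,
    )
    print(path, "cached" if path.is_file() else "missing")
```

The GUID and age to look for are the ones reported for the kernel PDB in the verbose output of the first failed run.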

The second problem is subtler and harder to diagnose: the automagic PDB scanner failing to locate the correct kernel base address, causing the familiar Unsatisfied requirement plugins.*.nt_symbols error even with correctly placed symbol files. Running vol.py -f image.dmp -vvvv windows.info reveals which base addresses were attempted and whether the scanner exhausted its candidate list. In several cases, specifying the kernel virtual offset manually through the configuration system is the only path forward. If acquisition was performed inside a virtualised environment, disabling hardware virtualisation in BIOS before the next capture frequently resolves the issue at the source.

Linux, Android, and macOS: building symbols from scratch

The third problem hits anyone working outside the Windows ecosystem. Volatility3 has no centralised symbol distribution for Linux, Android, or macOS. Every kernel version and build configuration requires a custom-generated symbol table produced with dwarf2json, a Go utility that processes DWARF debug data from a vmlinux binary and a System.map file. The kernel must have been compiled with CONFIG_DEBUG_INFO=y. Most distribution kernels do not enable this flag in their production builds, but major distributions (Ubuntu, Debian, Fedora, RHEL) ship debug symbols in separate packages (linux-image-*-dbgsym on Debian/Ubuntu, kernel-debuginfo on RHEL/Fedora) that contain the unstripped vmlinux needed by dwarf2json. A full recompile is only necessary when no matching debug package exists for the target kernel version.
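
The dwarf2json step can be wrapped in a few lines so that it is repeatable across kernels. This is a minimal sketch, assuming dwarf2json is on the PATH and that its linux subcommand accepts --elf and --system-map and writes the JSON to standard output; the function names are hypothetical.

```python
import subprocess


def dwarf2json_command(vmlinux, system_map=None, binary="dwarf2json"):
    """Build the argv for generating a Linux symbol table."""
    argv = [binary, "linux", "--elf", str(vmlinux)]
    if system_map is not None:
        argv += ["--system-map", str(system_map)]
    return argv


def generate_symbols(vmlinux, output_json, system_map=None):
    """Run dwarf2json and write its standard output to output_json."""
    argv = dwarf2json_command(vmlinux, system_map)
    with open(output_json, "wb") as out:
        # check=True raises CalledProcessError if dwarf2json fails.
        subprocess.run(argv, stdout=out, check=True)


if __name__ == "__main__":
    # Hypothetical file names, for illustration only.
    print(" ".join(dwarf2json_command("vmlinux", "System.map")))
```

The resulting JSON file then goes into the Linux symbols directory of the Volatility3 installation, optionally compressed.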

The fourth problem compounds the difficulty for Android emulator dumps. The kernel must be compiled from source using the exact toolchain version embedded in /proc/version, and the resulting vmlinux must produce a banner string that matches the memory dump character for character. A single invisible whitespace discrepancy causes Volatility3 to reject the symbol file without a clear explanation. Running vol.py banners on the dump before any other command verifies the expected banner and prevents hours of misdiagnosis.
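
Because the mismatch is often invisible in a terminal, comparing the two banners byte by byte is more reliable than reading them. The helper below is a small sketch that reports the first position where the banner from the dump and the banner from the compiled kernel diverge; the function name and the sample banners are hypothetical.

```python
def first_banner_mismatch(expected: bytes, candidate: bytes):
    """Return None if the banners are identical, else details of the first difference."""
    if expected == candidate:
        return None
    limit = min(len(expected), len(candidate))
    # Find the first differing byte; if one banner is a prefix of the
    # other, the difference starts where the shorter one ends.
    index = next((i for i in range(limit) if expected[i] != candidate[i]), limit)
    return {
        "index": index,
        "expected": expected[index:index + 1],
        "candidate": candidate[index:index + 1],
    }


if __name__ == "__main__":
    from_dump = b"Linux version 5.10.0 #1 SMP\n"
    from_build = b"Linux version 5.10.0 #1 SMP \n"  # stray trailing space
    print(first_banner_mismatch(from_dump, from_build))
```

Printing the byte values rather than decoded text makes stray spaces, tabs, and carriage returns obvious.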

The fifth problem is specific to macOS analysis. A Kernel Debug Kit (KDK) matching the exact OS build number must be downloaded from Apple’s developer portal. After running dwarf2json on the kernel DWARF bundle, the constant_data field in the resulting JSON must be manually populated with a base64-encoded Darwin banner string extracted from the target memory image. Forgetting this step or encoding the wrong banner produces the same “symbol table requirement not fulfilled” error seen on other platforms, but with no automatic resolution path available.
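
The manual edit described above can be scripted. The following is a sketch only: it assumes the banner lives under a symbol named version in the symbols section of the JSON, which should be confirmed against the file dwarf2json actually produced. The symbol name, function name, and sample banner are all hypothetical.

```python
import base64
import json


def set_banner(isf: dict, banner: bytes, symbol_name: str = "version") -> dict:
    """Store a base64-encoded banner in the constant_data field of a symbol."""
    # symbol_name is an assumption; inspect the generated JSON to confirm it.
    symbol = isf.setdefault("symbols", {}).setdefault(symbol_name, {})
    symbol["constant_data"] = base64.b64encode(banner).decode("ascii")
    return isf


if __name__ == "__main__":
    # Hypothetical banner; use the exact bytes recovered from the memory image.
    banner = b"Darwin Kernel Version (example)\x00"
    isf = {"symbols": {"version": {"address": 0}}}
    print(json.dumps(set_banner(isf, banner), indent=2))
```

The banner bytes must be copied from the image exactly, including any trailing NUL or newline, or the match will fail as before.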

Performance regression: when analysis takes days

The sixth problem is one of the most surprising: dramatic, sometimes catastrophic performance degradation on specific plugins. windows.filescan on a typical Windows 10 image takes over an hour in Volatility3, versus under one minute in the previous framework. The timeliner plugin, which aggregates artefacts from dozens of sources across the entire image, has been observed running for over a hundred hours on large dumps without completing.

The root cause is often the automagic stacker, which attempts multiple layer detection strategies sequentially before committing to a format. Each failed attempt carries measurable overhead. Constraining the automagic, for example by limiting the candidate stackers with the --stackers option or by saving a working configuration with --save-config and reloading it with -c on later runs, bypasses the guesswork and can reduce startup time from several minutes to a few seconds on the same image. QEMU memory dumps are the worst-affected format: the layered structure triggers repeated stacker retries that render most plugins impractical until the dump is converted to raw using the built-in layerwriter command (vol.py -f dump.qemu -o output_dir layerwriter.LayerWriter).

The seventh problem is a specific pathological case: windows.memmap.Memmap entering an infinite page-mapping loop on certain system processes such as svchost.exe and sihost.exe. As documented in GitHub issue #1920, the plugin prints the table header and then consumes 100% CPU indefinitely, producing no rows and only terminating after several days or a manual interrupt. For affected PIDs, windows.vadinfo.VadInfo provides the virtual address descriptor information required in most investigations and completes in a reasonable time on the same processes that cause the hang.
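
In batch processing, a hang like this is easier to live with if every plugin call is bounded by a timeout and falls back automatically. The sketch below assumes vol.py is on the PATH and that both plugins accept --pid; the function names and the ten-minute default are hypothetical choices.

```python
import subprocess
import sys


def run_with_timeout(argv, timeout_seconds):
    """Run a command; return its stdout, or None if it exceeds the timeout."""
    try:
        result = subprocess.run(
            argv, capture_output=True, text=True, timeout=timeout_seconds
        )
    except subprocess.TimeoutExpired:
        # subprocess.run kills the child process before raising.
        return None
    return result.stdout


def memmap_or_vadinfo(image, pid, timeout_seconds=600, vol=("vol.py",)):
    """Try windows.memmap.Memmap first, then fall back to windows.vadinfo.VadInfo."""
    base = [*vol, "-f", image]
    pid_args = ["--pid", str(pid)]
    output = run_with_timeout(base + ["windows.memmap.Memmap"] + pid_args, timeout_seconds)
    if output is None:
        output = run_with_timeout(base + ["windows.vadinfo.VadInfo"] + pid_args, timeout_seconds)
    return output


if __name__ == "__main__":
    # Demonstrates the timeout path with a child that sleeps too long.
    slow = [sys.executable, "-c", "import time; time.sleep(5)"]
    print(run_with_timeout(slow, 0.5))
```

The timeout should be set well above the normal runtime of the plugin on a healthy process so that slow but progressing runs are not cut short.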

The missing toolkit and the standalone binary problem

The eighth problem for practitioners migrating from Volatility2 is the absence of several plugins they relied on regularly. notepad and clipboard were not ported to Volatility3. Some, like notepad, were deliberately excluded because heap structure changes in modern Windows make the plugin fundamentally unreliable regardless of which framework hosts it. For text content buried in process memory, windows.strings provides an imperfect but functional substitute when it is given an offset-tagged string file through its --strings-file option. The plugin expects decimal offsets, so the file is produced with Sysinternals strings -o dump.mem > strings.txt on Windows or with GNU strings -a -t d dump.mem > strings.txt on Linux, where -o would emit octal offsets instead. However, as tracked in the long-running issue #876 in the Volatility3 repository, the offset mapping logic still has edge cases where strings confirmed present in dumped process memory go undetected, and the issue remains open after years of active discussion.
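
When no suitable strings binary is available on the analysis machine, an offset-tagged file can be approximated in a few lines. This is a rough sketch that extracts printable ASCII runs of at least four characters with decimal offsets; it ignores UTF-16 strings and reads the whole input into memory, so it is meant for carved regions rather than full dumps. The function name and output layout (offset, space, string) are assumptions.

```python
import re

# Runs of four or more printable ASCII characters.
PRINTABLE_RUN = re.compile(rb"[\x20-\x7e]{4,}")


def offset_tagged_strings(data: bytes):
    """Yield 'decimal_offset string' lines for each printable run in data."""
    for match in PRINTABLE_RUN.finditer(data):
        yield f"{match.start()} {match.group().decode('ascii')}"


if __name__ == "__main__":
    sample = b"\x00\x01hello\x00ab\x00password123\xff"
    for line in offset_tagged_strings(sample):
        print(line)
```

Runs shorter than four characters, such as the two-byte fragment in the sample, are skipped in the same way the default strings threshold would skip them.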

The ninth problem is the loss of the portable deployment model that was standard practice in incident response: early versions of Volatility3 shipped without a standalone Windows executable. Analysts accustomed to dropping a single binary onto a forensic workstation found themselves with no equivalent option, and the missing binary became the most-commented issue in the entire repository. Official pre-compiled executables are now distributed alongside each tagged release and can be downloaded directly from the GitHub releases page without a local Python installation, restoring the workflow that practitioners had built their field kits around.

Architectural culture shock and broken dependencies

The tenth problem is not a single error but a systematic architectural disruption affecting both daily usage and plugin development. The --profile= argument is gone. Plugin names are namespaced as windows.*, linux.*, and mac.*. The calculate() and render_text() pattern that structured every Volatility2 plugin has been replaced by a run() method that returns a TreeGrid, typically fed by a _generator() method yielding rows. Class inheritance changed, dependency declarations moved into a get_requirements() class method, and the short option flags that plugins used to accept (such as -p for a process ID) gave way to long-form options such as --pid. The official Volatility2 to Volatility3 migration guide documents these changes comprehensively, but no documentation fully prepares an analyst for the operational cost of running familiar commands against a framework that no longer recognises them.
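
During the transition, a small translation table can take some of the sting out of retyping old commands. The sketch below drops a --profile= argument and maps a handful of Volatility2 plugin names to their namespaced equivalents; the table is deliberately incomplete, plugin options are not translated, and the function name is hypothetical.

```python
# Partial mapping of Volatility2 plugin names to Volatility3 namespaced names.
PLUGIN_MAP = {
    "pslist": "windows.pslist",
    "pstree": "windows.pstree",
    "dlllist": "windows.dlllist",
    "cmdline": "windows.cmdline",
    "filescan": "windows.filescan",
    "netscan": "windows.netscan",
    "malfind": "windows.malfind",
}


def translate(vol2_argv):
    """Return a Volatility3-style argv for a simple Volatility2 command line."""
    vol3_argv = []
    for arg in vol2_argv:
        if arg.startswith("--profile="):
            continue  # profiles no longer exist; symbols are resolved automatically
        # Naive lookup: any argument equal to a known plugin name is replaced.
        vol3_argv.append(PLUGIN_MAP.get(arg, arg))
    return vol3_argv


if __name__ == "__main__":
    old = ["vol.py", "-f", "image.dmp", "--profile=Win10x64_18362", "pslist"]
    print(" ".join(translate(old)))
```

Only the --profile=NAME form is handled; a space-separated --profile NAME and any plugin-specific options would need their own rules.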

Woven through this architectural transition is a dependency problem that cripples many first installations. yara-python and pefile are listed as optional but practically mandatory for production use. Missing yara-python silently disables yarascan, vadyarascan, and mftscan. Missing pefile eliminates verinfo, netscan, netstat, and skeleton_key_check at import time. Running pip install pefile "yara-python>=3.8.0" capstone pycryptodome immediately after the base installation closes most of these gaps. On Windows, libyara.dll must be in the system PATH and the Python architecture must match the YARA binary architecture exactly, a constraint that silently breaks installations where system Python is 64-bit and the installed YARA wheel is 32-bit.
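
Since the missing modules fail silently, a quick pre-flight check is worth running after every installation. This sketch uses only the standard library to report which optional modules cannot be found and which plugins the text above associates with them, and it also prints the interpreter's bit width for the architecture comparison. The import names yara and pefile are those of the yara-python and pefile packages; the function names are hypothetical.

```python
import importlib.util
import struct

# Import name -> plugins that depend on it, as listed in the text above.
OPTIONAL_MODULES = {
    "yara": ["yarascan", "vadyarascan", "mftscan"],
    "pefile": ["verinfo", "netscan", "netstat", "skeleton_key_check"],
}


def missing_dependencies(modules=OPTIONAL_MODULES):
    """Return the subset of modules that cannot be located for import."""
    return {
        name: plugins
        for name, plugins in modules.items()
        if importlib.util.find_spec(name) is None
    }


def interpreter_bits():
    """Return the pointer width of the running Python interpreter in bits."""
    return struct.calcsize("P") * 8


if __name__ == "__main__":
    print(f"Python is {interpreter_bits()}-bit")
    for name, plugins in missing_dependencies().items():
        print(f"{name} not found; affected plugins: {', '.join(plugins)}")
```

The check only confirms that a module can be located, not that its native library loads, so a final import test inside the same interpreter is still advisable on Windows.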

Despite all of this, the direction of travel is clear. The Volatility Foundation continues to close the feature gap with each release, and the underlying architecture of Volatility3 is genuinely better suited to modern memory analysis than its predecessor. The investment required to navigate these ten problems is real but not prohibitive, and it pays back quickly once the environment is correctly configured and the new mental model is internalised.